Data Security on Mobile Devices: Current State of the Art, Open Problems, and Proposed Solutions

Executive Summary

In this work we present definitive evidence, analysis, and (where needed) speculation to answer the questions, “Which concrete security measures in mobile devices meaningfully prevent unauthorized access to user data?” “In what ways are modern mobile devices accessed by unauthorized parties?” and finally, “How can we improve modern mobile devices to prevent unauthorized access?”

We examine the two major platforms in the mobile space, iOS and Android, and for each we provide a thorough investigation of existing and historical security features, evidence-based discussion of known security bypass techniques, and concrete recommendations for remediation. In iOS we find a compelling set of security and privacy controls, empowered by strong encryption, and yet a critical lack in coverage due to under-utilization of these tools leading to serious privacy and security concerns. In Android we find strong protections emerging in the very latest flagship devices, but simultaneously fragmented and inconsistent security and privacy controls, not least due to disconnects between Google and Android phone manufacturers, the deeply lagging rate of Android updates reaching devices, and various software architectural considerations. We also find in both platforms exacerbating factors due to increased synchronization of data with cloud services.

The markets for exploits and forensic software tools which target these platforms are alive and well. We aggregate and analyze public records, documentation, articles, and blog postings to categorize and discuss unauthorized bypass of security features by hackers and law enforcement alike. Motivated by an accelerating number of cases since Apple v. FBI in 2016, we analyze the impact of forensic tools, and the privacy risks involved in unchecked seizure and search. Then, we provide in-depth analysis of the data potentially accessed via law enforcement methodologies from both mobile devices and associated cloud services.

Our fact-gathering and analysis allow us to make a number of recommendations for improving data security on these devices. In both iOS and Android we propose concrete improvements which mitigate or entirely address many concerns we raise, and provide analysis towards resolving the remainder. The mitigations we propose can be largely summarized as increasing coverage of sensitive data via strong encryption, but we detail various challenges and approaches towards this goal and others.

It is our hope that this work stimulates mobile device development and research towards security and privacy, provides a unique reference of information, and acts as an evidence-based argument for the importance of reliable encryption to privacy, which we believe is both a human right and integral to a functioning democracy.

Contents

- 1 Introduction

- 2 Technical Background

- 3 Apple iOS

- 4 Android

- 5 Conclusion

- A History of iOS Security Features

- B History of Android Security Features

- C History of Forensic Tools

List of Figures

- 2.1 List of Targets for NIST Mobile Device Acquisition Forensics

- 3.1 iPhone Passcode Setup Interface

- 3.2 Apple Code Signing Process Documentation

- 3.3 iOS Data Protection Key Hierarchy

- 3.4 List of iOS Data Protection Classes

- 3.5 List of Data Included in iCloud Backup

- 3.6 List of iCloud Data Accessible by Apple

- 3.7 List of iCloud Data Encrypted “End-to-End”

- 3.8 Secure Enclave Processor Key Derivation

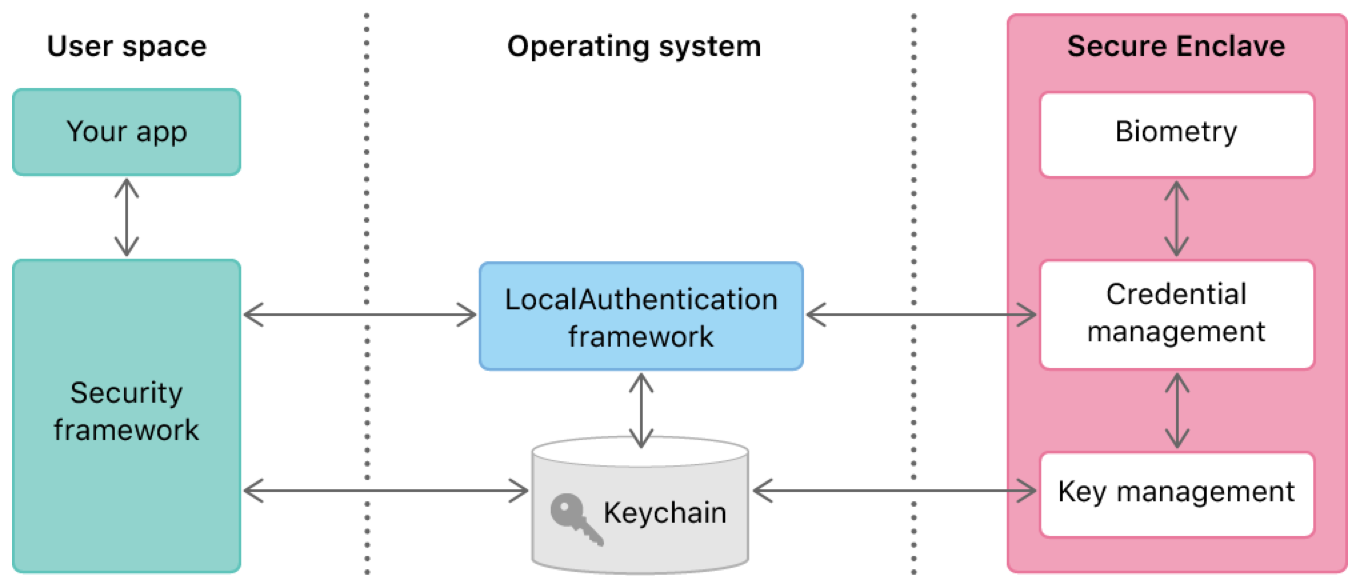

- 3.9 LocalAuthentication Interface for TouchID/FaceID

- 3.10 Apple Documentation on Legal Requests for Data

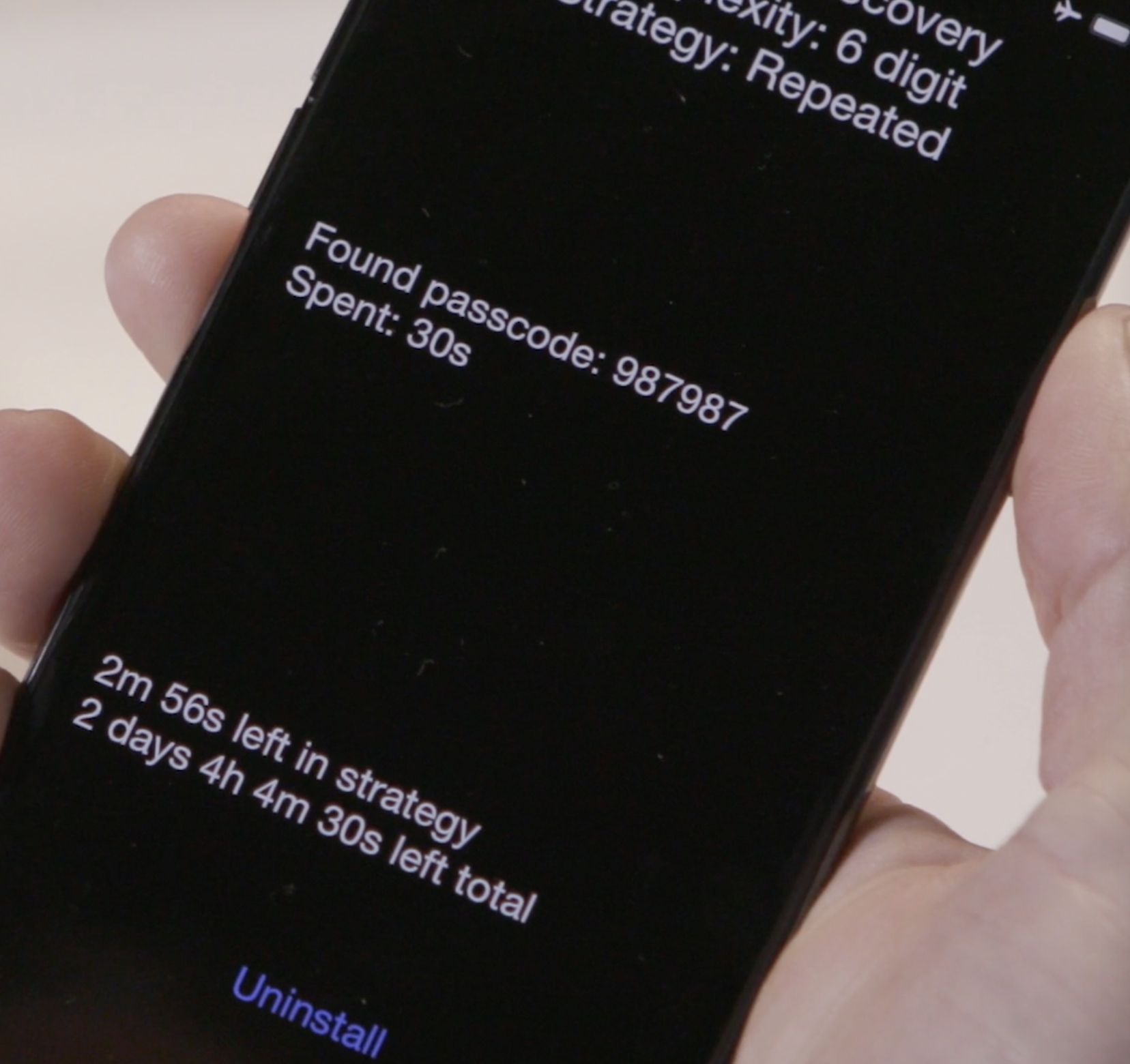

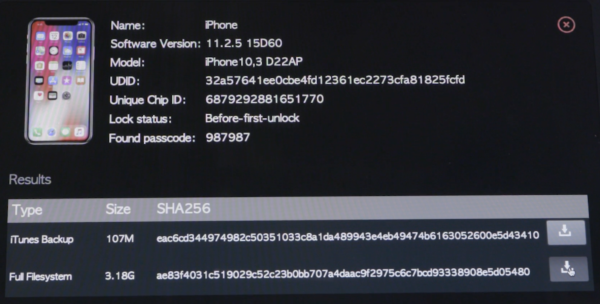

- 3.11 Alleged Leaked Images of the GrayKey Passcode Guessing Interface

- 3.12 List of Data Categories Obtainable via Device Forensic Software

- 3.13 Cellebrite UFED Touch 2

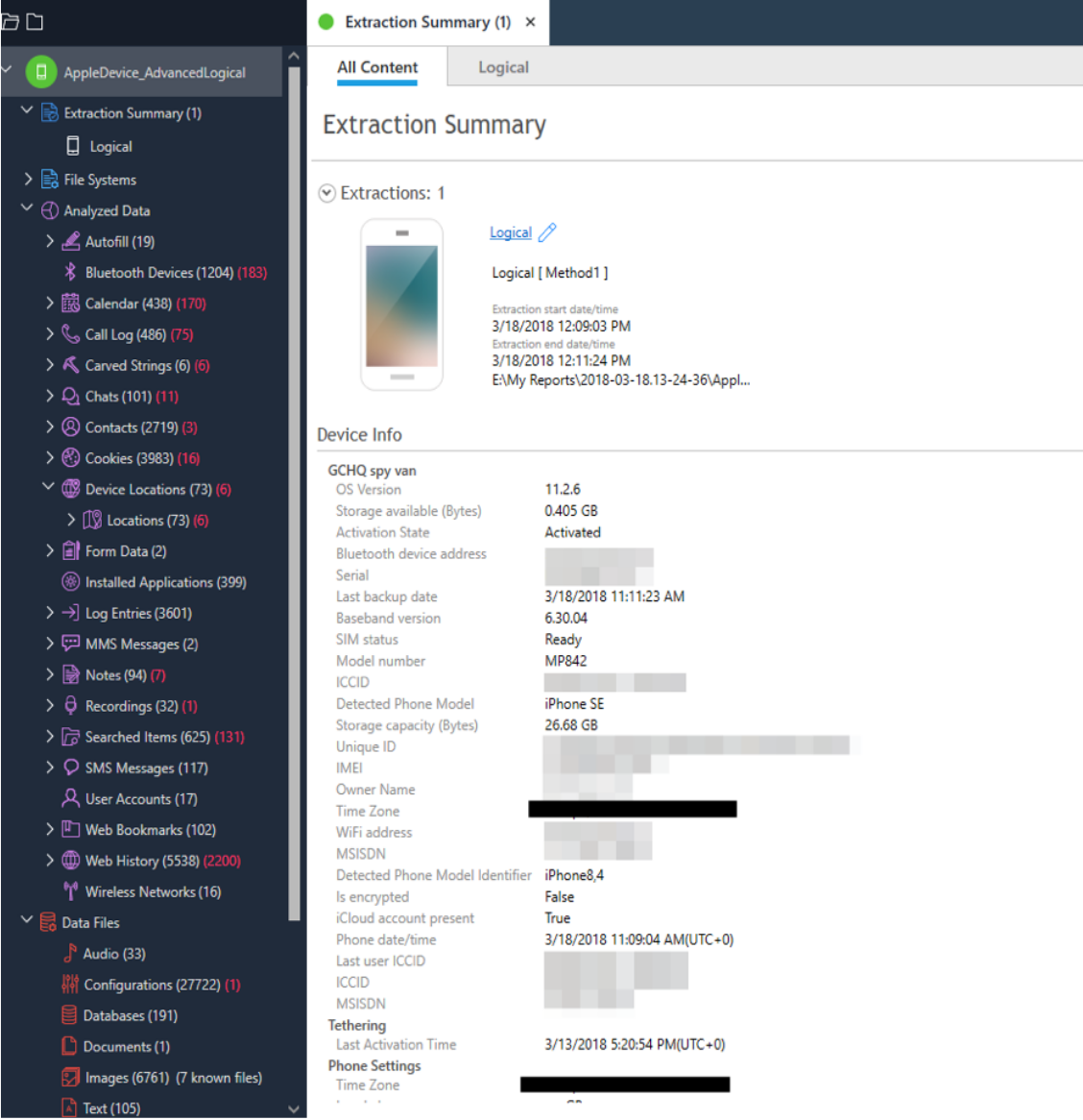

- 3.14 Cellebrite UFED Interface During Extraction of an iPhone

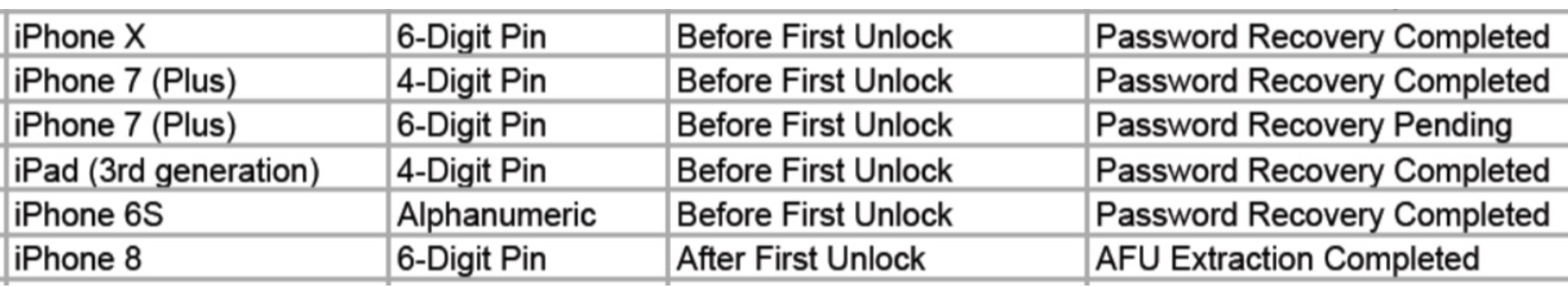

- 3.15 Records from Arizona Law Enforcement Agencies Documenting Passcode Recovery on iOS

- 3.16 GrayKey by Grayshift

- 3.17 List of Data Categories Obtainable via Cloud Forensic Software

- 4.1 PIN Unlock on Android 11

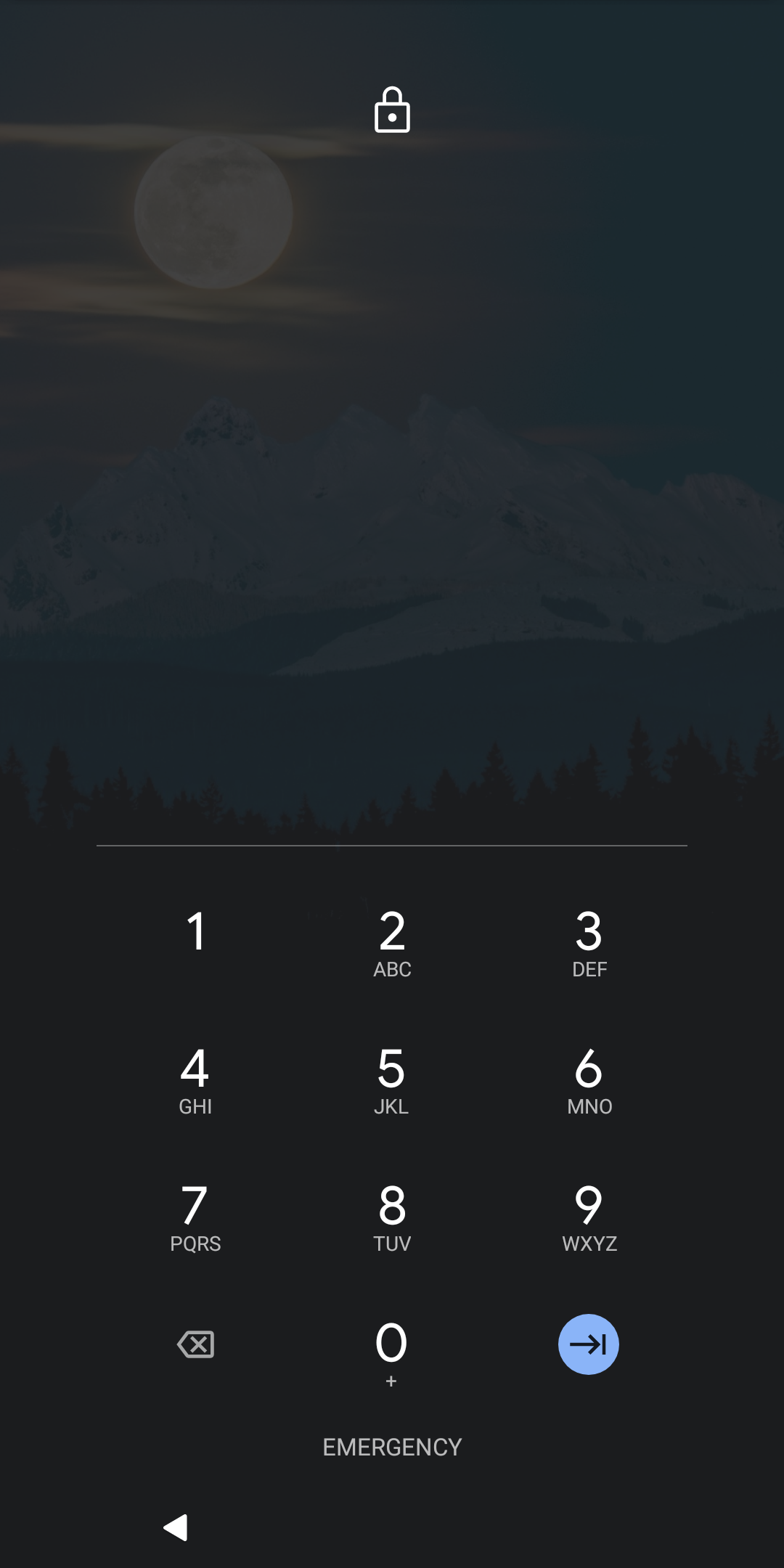

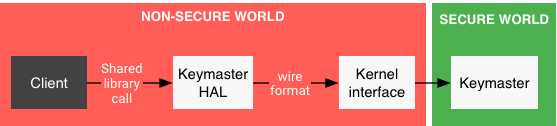

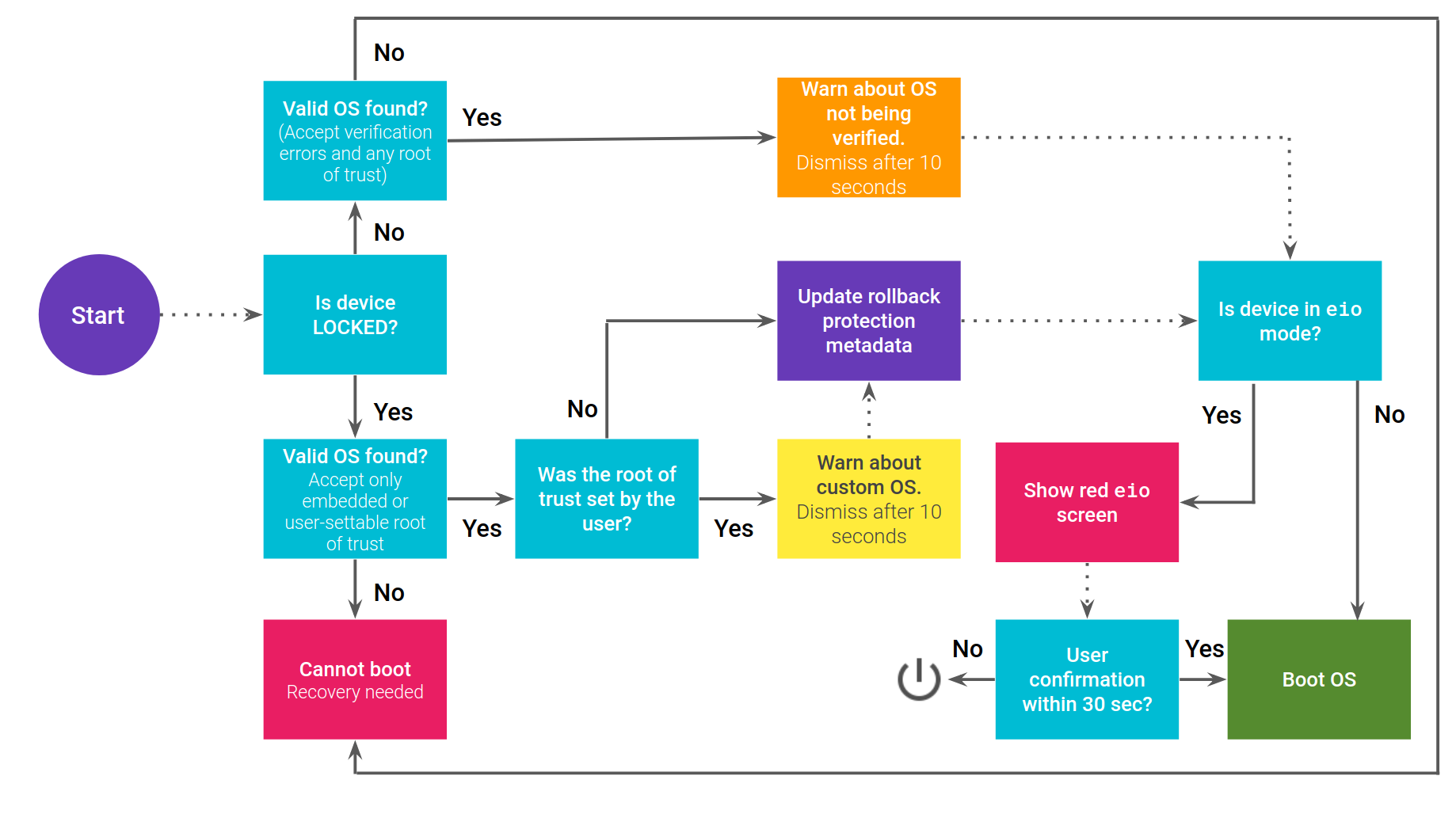

- 4.2 Flow Chart of an Android Keymaster Access Request

- 4.3 Android Boot Process Using Verified Boot

- 4.4 Relationship Between Google Play Services and an Android App

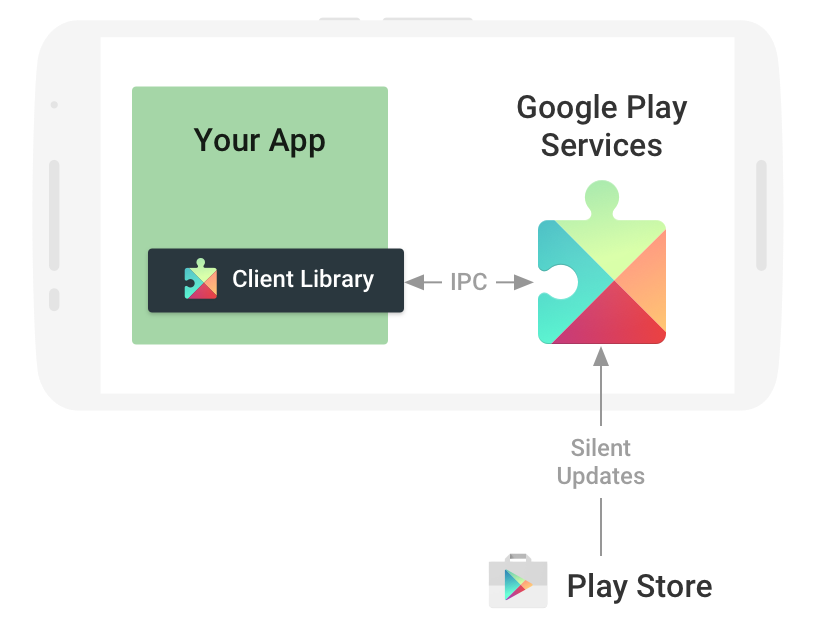

- 4.5 Signature location in an Android APK

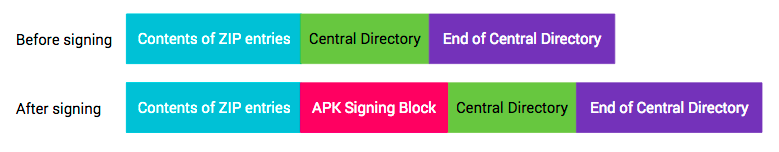

- 4.6 Installing Unknown Apps on Android

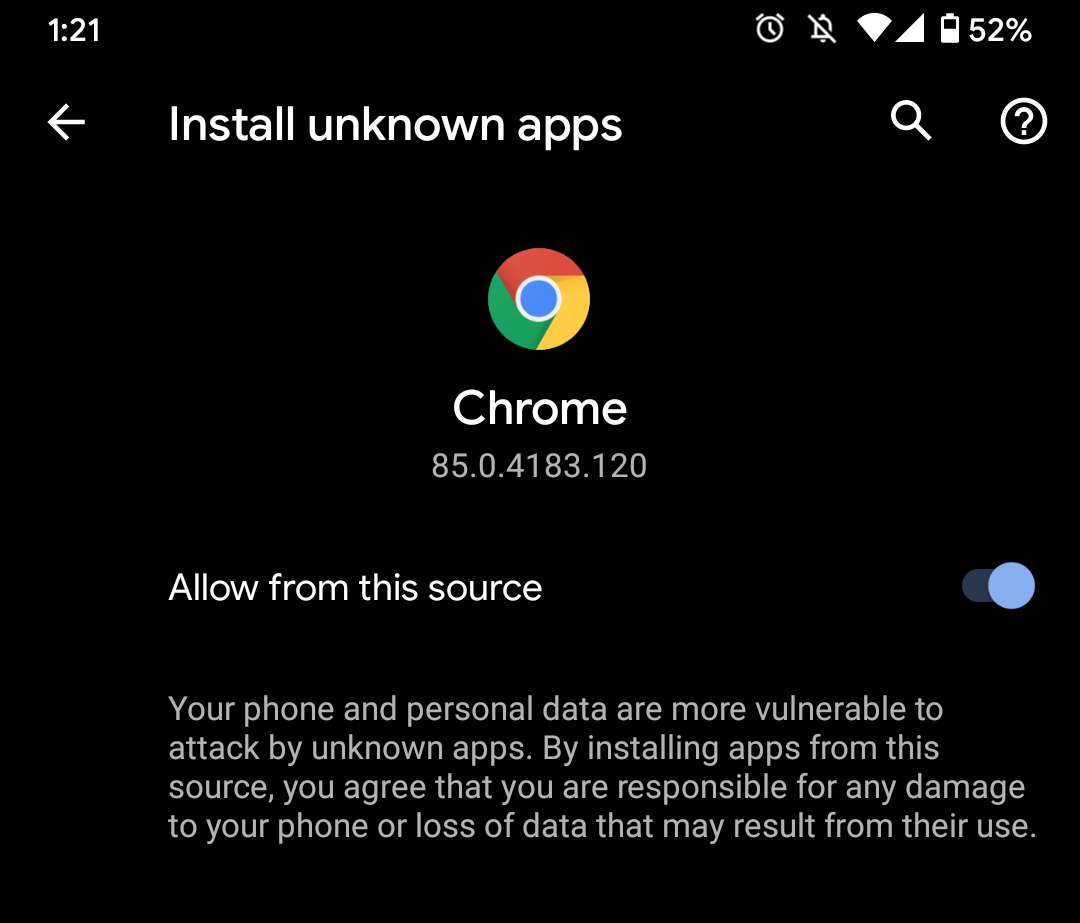

- 4.7 Android Backup Flow Diagram for App Data

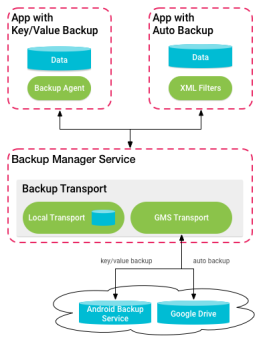

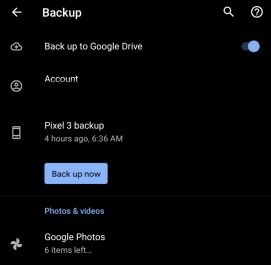

- 4.8 Android Backup Interface

- 4.9 List of Data Categories Included in Google Account Backup

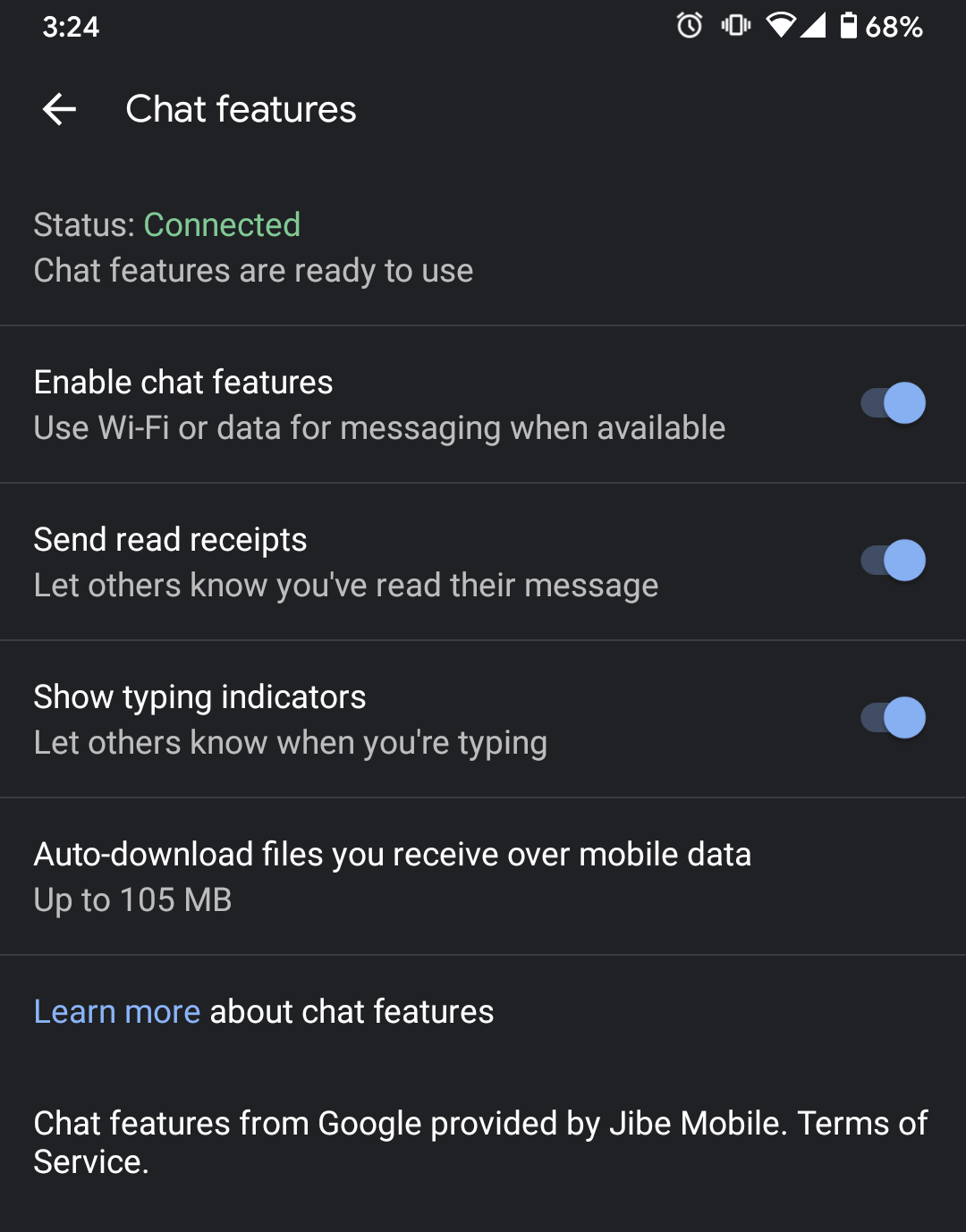

- 4.10 Google Messages RCS Chat Features

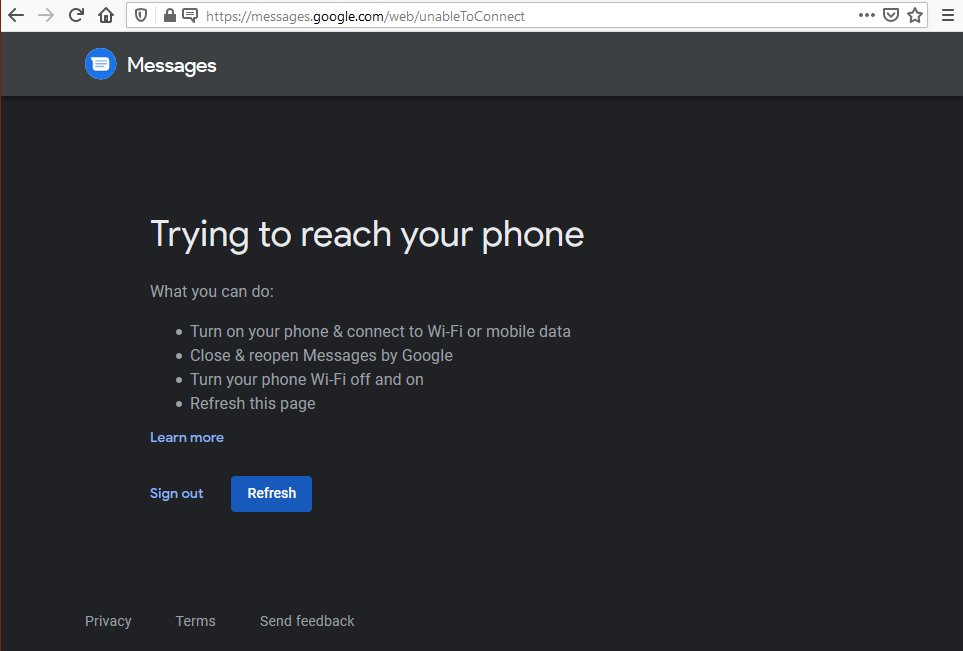

- 4.11 Google Messages Web Client Connection Error

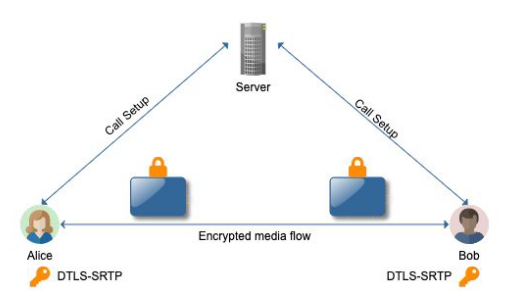

- 4.12 Google Duo Communication Flow Diagram

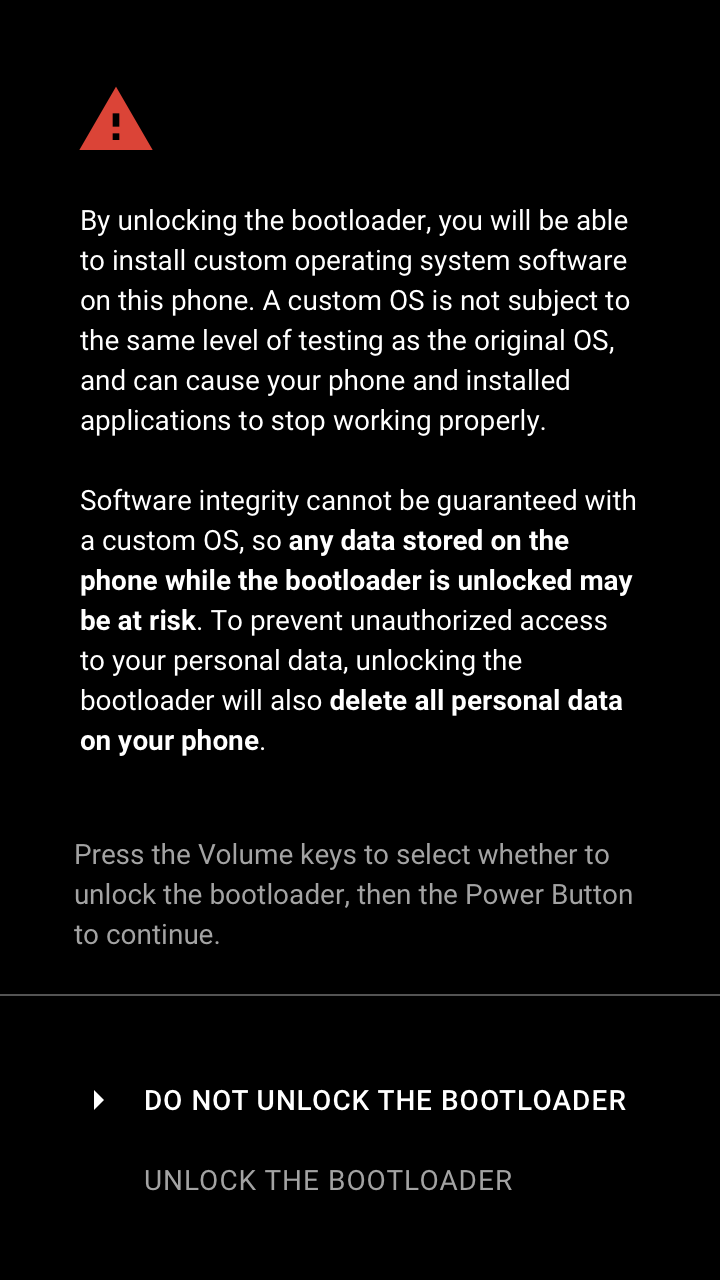

- 4.13 Legitimate Bootloader Unlock on Android

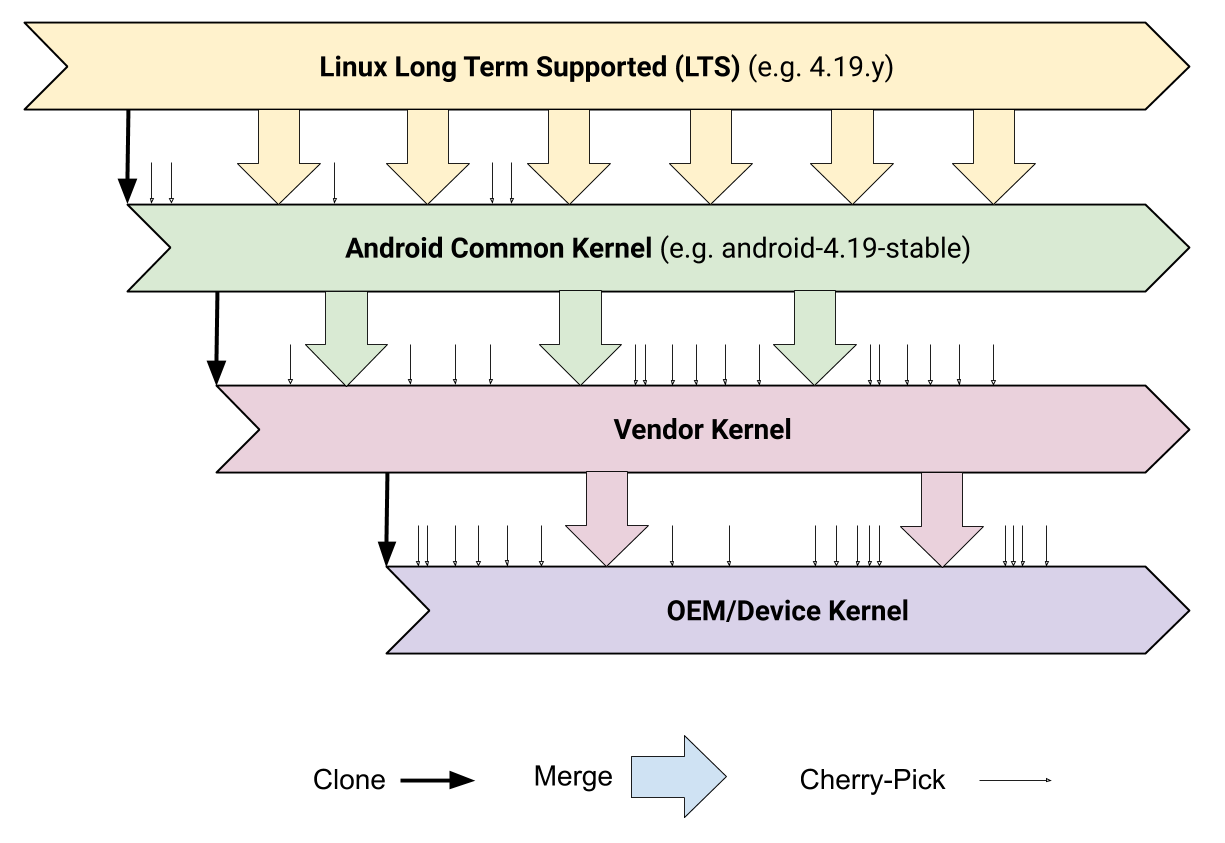

- 4.14 Kernel Hierarchy on Android

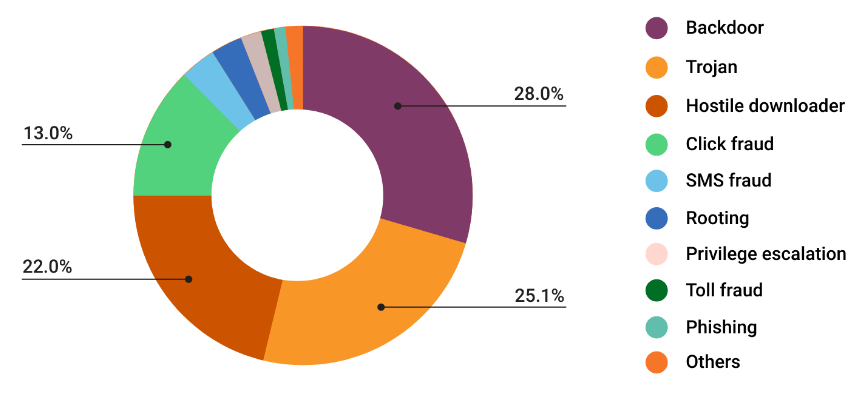

- 4.15 Distribution of PHAs for Apps Installed Outside of the Google Play Store

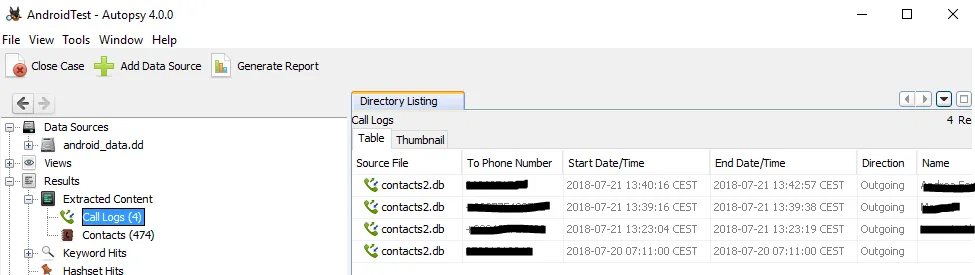

- 4.16 Autopsy Forensic Analysis for an Android Disk Image

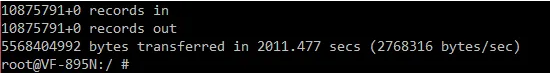

- 4.17 Extracting Data on a Rooted Android Device Using dd

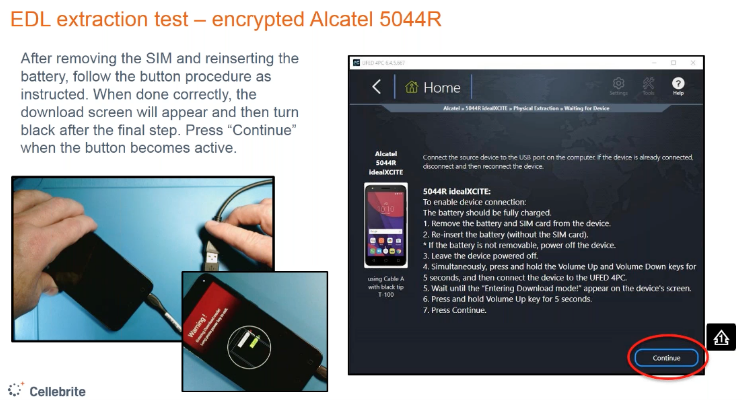

- 4.18 Cellebrite EDL Instructions for an Encrypted Alcatel Android Device

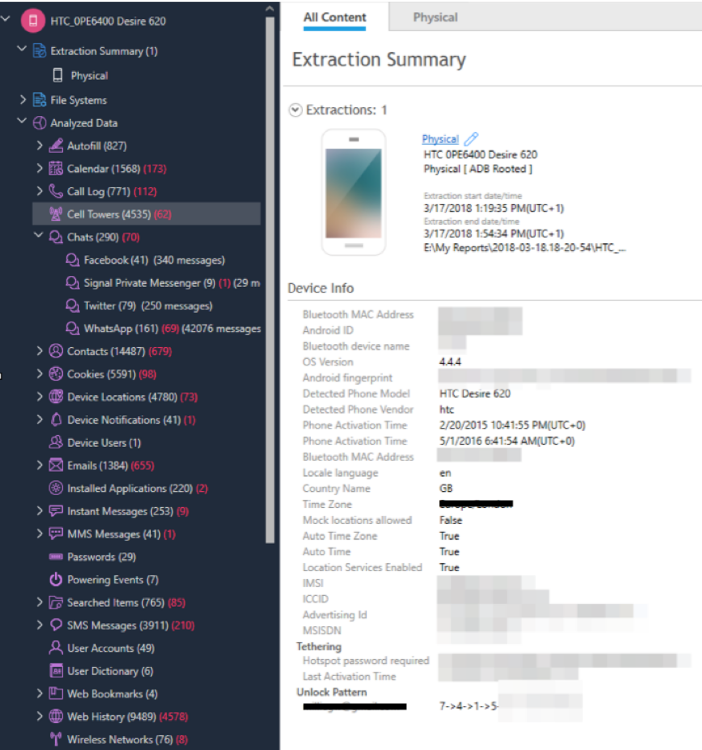

- 4.19 Cellebrite UFED Interface During Extraction of an HTC Desire Android Device

- C.1 Legend for Forensic Access Tables

List of Tables

- 3.1 History of iOS Data Protection

- 3.2 History of iPhone Hardware Security

- 3.3 Passcode Brute-Force Time Estimates

- 3.4 History of Jailbreaks on iPhone

- 3.5 History of iOS Lock Screen Bypasses

- 4.1 History of Android (AOSP) Security Features

- C.1 iOS and Android Forensic Tool Access (2019)

- C.2 iOS and Android Forensic Tool Access (2018)

- C.3 iOS and Android Forensic Tool Access (2017)

- C.4 iOS Forensic Tool Access (2010–2016)

- C.5 Android Forensic Tool Access (2010–2016)

- C.6 History of Forensic Tools (2019)

- C.7 History of Forensic Tools (2018)

- C.8 History of Forensic Tools (2017)

- C.9 History of Forensic Tools (2010–2016)

Chapter 1 Introduction

Mobile devices have become a ubiquitous component of modern life. More than 45% of the global population uses a smartphone, while this number exceeds 80% in the United States. This widespread adoption is a double-edged sword: the smartphone vastly increases the amount of information that individuals can carry with them; at the same time, it has created new potential targets for third parties to obtain sensitive data. The portability and ease of access of smartphones make them a target for malicious actors and law enforcement alike: to the former, they provide new opportunities for criminality; to the latter, they offer new avenues for investigation, monitoring, and surveillance.

Over the past decade, hardware and software manufacturers have acknowledged these concerns, in the process deploying a series of major upgrades to smartphone hardware and operating systems. These include mechanisms designed to improve software security; default use of passcodes and biometric authentication; and the incorporation of strong encryption mechanisms to protect data in motion and at rest. While these improvements have enhanced the ability of smartphones to prevent data theft, they have provoked a backlash from the law enforcement community. This reaction is best exemplified by the FBI’s “Going Dark” initiative, which seeks to increase law enforcement’s access to encrypted data via legislative and policy initiatives. These concerns have also motivated law enforcement agencies, in collaboration with industry partners, to invest in developing and acquiring technical means for bypassing smartphone security features. This dynamic broke into the public consciousness during the 2016 “Apple v. FBI” controversy, in which Apple contested an FBI demand to bypass technical security measures, and a vigorous debate over these issues continues to this day. Since 2015, in the US alone, hundreds of thousands of forensic searches of mobile devices have been executed by over 2,000 law enforcement agencies, in all 50 states and the District of Columbia, which have purchased tools implementing such bypass measures.

The tug-of-war between device manufacturers, law enforcement, and third-party vendors has an important consequence for users: at any given moment, it is difficult to know which smartphone security features are operating as intended, and which can be bypassed via technical means. The resulting confusion is made worse by the fact that manufacturers and law enforcement routinely withhold technical details from the public and from each other. What limited information is available may be distributed across many different sources, ranging from obscure technical documents to court filings. Moreover, these documents can sometimes embed important technical information that is only meaningful to an expert in the field. Finally, competing interests between law enforcement and manufacturers may result in compromises that negatively affect user security.

The outcome of these inconsistent protections and increasing law enforcement access is massive potential for violations of privacy. This is more than potential: technology is already allowing law enforcement agencies around the world to surveil people. Technological solutions are only part of the path to remediating these issues, and while we leave policy advocacy to experts in that area, we present these contributions in pursuit of progress on the technical front.

Our contributions

In this work we attempt a full accounting of the current and historical status of smartphone security measures. We focus on several of the most popular device types, and present a complete description of both the available security mechanisms in these devices, as well as a summary of the known public information on the state-of-the-art in bypass techniques for each. Our goal is to provide a single periodically updated guide that serves to detail the public state of data security in modern smartphones.

More concretely, we make the following specific contributions:

1. We provide a technical overview of the key data security features included in modern Apple and Android-based smartphones, operating systems (OSes), and cloud backup systems. We discuss which forms of data are available in these systems, and under what scenarios this data is protected. Finally, to provide context for this description, we also offer a historical timeline detailing known improvements in each feature.

2. We analyze more than a decade of public information on software exploits and DHS forensic reports and investigative documents, with the goal of formulating an understanding of which security features are (and historically have been) bypassed by criminals and law enforcement, and which security features are currently operating as designed.

3. Based on the understanding above, we suggest changes and improvements that could further harden smartphones against unauthorized access.

We enter this analysis with two major goals. The first is an attempt to solve a puzzle: despite substantial technological advances in systems that protect user data, law enforcement agencies appear to be accessing device data with increasing sophistication. This implies that law enforcement, at least, has become adept at bypassing these security mechanisms. A major goal in our analysis is to understand how this access is being conducted, on the theory that any vulnerabilities used by public law enforcement agencies could also be used by malicious actors.

A second and related goal of this analysis is to help provide context for the current debate about law enforcement access to smartphone encrypted data, by demonstrating which classes of data are already accessible to law enforcement today via known technological bypass techniques. We additionally seek to determine which security technologies are effectively securing user data, and which technologies require improvement.

Platforms examined. Our analysis focuses on the two most popular OS platforms currently in use by smartphone vendors: Apple’s iOS and Google’s Android. We begin by enumerating the key security technologies available in each platform, and then we discuss the development of these technologies over time. Our primary goal in each case is to develop an understanding of the current state of the technological protection measures that protect user data on each platform.

Sources of bypass data. Having described these technological mechanisms, we then focus our analysis on known techniques for bypassing smartphone security measures. Much of this information is well-known to those versed in smartphone technology. However, in order to gain a deeper understanding of this area, we also examined a large corpus of forensic test results published by the U.S. Department of Homeland Security, and scoured public court documents for evidence of surprising new techniques. This analysis provides us with a complete picture of which security mechanisms are likely to be bypassed, and the impact of such bypasses. We provide a concise, complete summary of the contents of the DHS forensic tool test results in Appendix C.

Threat Model. In this work we focus on two sources of device data compromise: physical access to a device, e.g. via device seizure or theft, and remote access to data via cloud services. The physical access scenario assumes that the attacker has gained access to the device, and can physically or logically exploit it via the provided interfaces. Since obtaining data is relatively straightforward when the attacker has authorized access to the device, we focus primarily on unauthorized access scenarios in which the attacker does not possess the passcode or login credentials needed to access the device.

By contrast, our cloud access scenario assumes that the attacker has gained access to cloud-stored data. This access may be obtained through credential theft (e.g. spear-phishing attack), social engineering of cloud provider employees, or via investigative requests made to cloud providers by law enforcement authorities. While we note that legitimate investigative procedures differ from criminal access from a legal point of view, we group these attacks together due to the fact that they leverage similar technological capabilities.

1.1 Summary of Key Findings

We now provide a list of our key findings for both Apple iOS and Google Android devices.

1.1.1 Apple iOS

Apple iOS devices (iPhones, iPads) incorporate a number of security features that are intended to limit unauthorized access to user data. These include software restrictions, biometric access control sensors, and widespread use of encryption within the platform. Apple is also noteworthy for three reasons: the company has overall control of both the hardware and operating system software deployed on its devices, the company’s business model closely restricts which software can be installed on the device, and Apple management has, in the past, expressed vocal opposition to making technical changes in their devices that would facilitate law enforcement access.

To determine the level of security currently provided by Apple against sophisticated attackers, we considered the full scope of Apple’s public documentation, as well as published reports from the U.S. Department of Homeland Security (DHS), postings from mobile forensics companies, and other documents in the public record. Our main findings are as follows:

- Limited benefit of encryption for powered-on devices. Apple advertises the broad use of encryption to protect user data stored on-device. However, we observed that a surprising amount of sensitive data maintained by built-in applications is protected using a weak “available after first unlock” (AFU) protection class, which does not evict decryption keys from memory when the phone is locked. The impact is that the vast majority of sensitive user data from Apple’s built-in applications can be accessed from a phone that is captured and logically exploited while it is in a powered-on (but locked) state. We also found circumstantial evidence from a 2014 update to Apple’s documentation that the company has, in the past, reduced the protection class assurances regarding certain system data, to unknown effect.

  Finally, we found circumstantial evidence in both the DHS procedures and investigative documents that law enforcement now routinely exploits the availability of decryption keys to capture large amounts of sensitive data from locked phones. Documents acquired by Upturn, a privacy advocacy organization, support these conclusions, documenting law enforcement records of passcode recovery against both powered-off and simply locked iPhones of all generations.

- Weaknesses of cloud backup and services. Apple’s iCloud service provides cloud-based device backup and real-time synchronization features. By default, this includes photos, email, contacts, calendars, reminders, notes, text messages (iMessage and SMS/MMS), Safari data (bookmarks, search and browsing history), Apple Home data, Game Center data, and cloud storage for installed apps.

  We examine the current state of data protection for iCloud, and determine (unsurprisingly) that activation of these features transmits an abundance of user data to Apple’s servers, in a form that can be accessed remotely by criminals who gain unauthorized access to a user’s cloud account, as well as authorized law enforcement agencies with subpoena power. More surprisingly, we identify several counter-intuitive features of iCloud that increase the vulnerability of this system. As one example, Apple’s “Messages in iCloud” feature advertises the use of an Apple-inaccessible “end-to-end” encrypted container for synchronizing messages across devices. However, activation of iCloud Backup in tandem causes the decryption key for this container to be uploaded to Apple’s servers in a form that Apple (and potential attackers, or law enforcement) can access. Similarly, we observe that Apple’s iCloud Backup design results in the transmission of device-specific file encryption keys to Apple. Since these keys are the same keys used to encrypt data on the device, this transmission may pose a risk in the event that a device is subsequently physically compromised.

  More generally, we find that the documentation and user interface of these backup and synchronization features are confusing, and may lead to users unintentionally transmitting certain classes of data to Apple’s servers.

- Evidence of past hardware (SEP) compromise. iOS devices place strict limits on passcode guessing attacks through the assistance of a dedicated processor known as the Secure Enclave processor (SEP). We examined the public investigative record to review evidence that strongly indicates that as of 2018, passcode guessing attacks were feasible on SEP-enabled iPhones using a tool called GrayKey. To our knowledge, this most likely indicates that a software bypass of the SEP was available in-the-wild during this timeframe. We also reviewed more recent public evidence, and were not able to find dispositive evidence that this exploit is still in use for more recent phones (or whether exploits still exist for older iPhones). Given how critical the SEP is to the ongoing security of the iPhone product line, we flag this uncertainty as a serious risk to consumers.

- Limitations of “end-to-end encrypted” cloud services. Several Apple iCloud services advertise “end-to-end” encryption in which only the user (with knowledge of a password or passcode) can access cloud-stored data. These services are optionally provided in Apple’s CloudKit containers and via the iCloud Keychain backup service. Implementation of this feature is accomplished via the use of dedicated Hardware Security Modules (HSMs) provisioned at Apple’s data centers. These devices store encryption keys in a form that can only be accessed by a user, and are programmed by Apple such that cloud service operators cannot transfer information out of an HSM without user permission.

  As noted above, our finding is that the end-to-end confidentiality of some encrypted services is undermined when used in tandem with the iCloud backup service. More critically, we observe that Apple’s documentation and user settings blur the distinction between “encrypted” (such that Apple has access) and “end-to-end encrypted” in a manner that makes it difficult to understand which data is available to Apple. Finally, we observe a fundamental weakness in the system: Apple can easily cause user data to be re-provisioned to a new (and possibly compromised) HSM simply by presenting a single dialog on a user’s phone. We discuss techniques for mitigating this vulnerability.

Based on these findings, our overall conclusion is that data for iOS devices is highly available to both sophisticated criminals and law enforcement actors with either cloud or physical access. This is due to a combination of the weak protections offered by current Apple iCloud services, and weak defaults used for encrypting sensitive user data on-device. The impact of these choices is that Apple’s data protection is fragile: once certain software or cloud authentication features are breached, attackers can access the vast majority of sensitive user data on device. Later in this work we propose changes aimed at improving the resilience of Apple’s security measures.
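To make the difference between protection classes concrete, the following is a minimal sketch of which class keys would be resident in memory in each device state. It is an illustrative model, not Apple’s implementation. The names `DeviceState`, `ProtectionClass`, and `key_in_memory` are hypothetical, only the two classes named in this summary are modeled, and the eviction of Complete Protection keys (which happens shortly after lock) is simplified to happen at lock.

```python
from enum import Enum


class DeviceState(Enum):
    # Hypothetical labels for the device states discussed in the text.
    BEFORE_FIRST_UNLOCK = "powered on, never unlocked since boot"
    LOCKED_AFTER_FIRST_UNLOCK = "locked, but unlocked at least once since boot"
    UNLOCKED = "currently unlocked"


class ProtectionClass(Enum):
    COMPLETE = "Complete Protection (CP)"
    AFTER_FIRST_UNLOCK = "available after first unlock (AFU)"


def key_in_memory(pclass: ProtectionClass, state: DeviceState) -> bool:
    """Return True if the class key is assumed to be resident in memory."""
    if state is DeviceState.BEFORE_FIRST_UNLOCK:
        # No passcode has been entered since boot, so neither key is available.
        return False
    if pclass is ProtectionClass.COMPLETE:
        # Simplification: the CP key is treated as evicted as soon as the device locks.
        return state is DeviceState.UNLOCKED
    # AFU keys stay in memory from the first unlock until power-off.
    return True


if __name__ == "__main__":
    for state in DeviceState:
        resident = [c.value for c in ProtectionClass if key_in_memory(c, state)]
        print(f"{state.value}: {resident or 'no class keys resident'}")
```

Under this model, a phone seized while locked after first unlock still holds the AFU key, which is why data placed in that class is exposed to logical exploitation of a powered-on device.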

1.1.2 Google Android & Android Phones

Google’s Android operating system, and many third-party phones that use Android, incorporate a number of security features that are analogous to those provided by Apple devices. Unlike Apple, Google does not fully control the hardware and software stack on all Android-compatible smartphones: some Google Android phones are manufactured entirely by Google, while other devices are manufactured by third parties. Moreover, device manufacturers routinely modify the Android operating system prior to deployment.

This fact makes a complete analysis of the Android smartphone ecosystem more challenging. In this work, we choose to focus on a number of high-profile phones such as Google Pixel devices and recent-model Samsung Galaxy phones, mainly because these devices are either representative devices designed by Google to fully encapsulate the capabilities of the Android OS, or best-selling Android phones, with large numbers of active devices worldwide. We additionally focus primarily on recent versions of Android (Android 10 and 11, as of this writing). We note, however, that the Android ecosystem is highly fragmented, and contains large numbers of older-model phones that no longer receive OS software updates, a diversity of manufacturers, and a subclass of phones which are built using inexpensive hardware that lacks advanced security capabilities. Our findings in this analysis are therefore necessarily incomplete, and should be viewed as an optimistic “best case.”

To determine the level of security currently provided by these Android devices against sophisticated attackers, we considered the full scope of Google’s public documentation, as well as published reports from the U.S. Department of Homeland Security (DHS), postings from mobile forensics companies, and other documents in the public record. Our main findings are as follows:

- Limited benefit of encryption for powered-on devices. Like Apple iOS, Google Android provides encryption for files and data stored on disk. However, Android’s encryption mechanisms provide fewer gradations of protection. In particular, Android provides no equivalent of Apple’s Complete Protection (CP) encryption class, which evicts decryption keys from memory shortly after the phone is locked. As a consequence, Android decryption keys remain in memory at all times after “first unlock,” and user data is potentially vulnerable to forensic capture.

- De-prioritization of end-to-end encrypted backup. Android incorporates an end-to-end encrypted backup service based on physical hardware devices stored in Google’s datacenters. The design of this system ensures that recovery of backups can only occur if initiated by a user who knows the backup passcode, an on-device key protected by the user’s PIN or other authentication factor. Unfortunately, the end-to-end encrypted backup service must be opted into by app developers, and is paralleled by the opt-out Android Auto-Backup, which simply synchronizes app data to Google Drive, encrypted with keys held by Google.

- Large attack surface. Android is the composition of systems developed by various organizations and companies. The Android kernel has Linux at its core, but also contains chip vendor- and device manufacturer-specific modifications. Apps, along with support libraries, integrate with system components and provide their own services to the rest of the device. Because the development of these components is not centralized, cohesively integrating security for all of Android would require significant coordination, and in many cases such efforts are lacking or nonexistent.

- Limited use of end-to-end encryption. End-to-end encryption for messages in Android is only provided by default in third-party messaging applications. Native Android applications do not provide end-to-end encryption; the only exception is Google Duo, which provides end-to-end encrypted video calls. The current lack of default end-to-end encryption for messages allows the service provider (for example, Google) to view messages and logs, potentially putting user data at risk from hacking, unwanted targeted advertising, subpoena, and surveillance systems.

- Availability of data in services. Android has deep integration with Google services, such as Drive, Gmail, and Photos. Android phones that utilize these services (the large majority of them) send data to Google, which stores the data under keys it controls - effectively an extension of the lack of end-to-end encryption beyond just messaging services. These services accumulate rich sets of information on users that can be exfiltrated either by knowledgeable criminals (via system compromise) or by law enforcement (via subpoena power).

Chapter 2 Technical Background

2.1 Data Security Technologies for Mobile Devices

Modern smartphones generate and store immense amounts of sensitive personal information. This data comes in many forms, including photographs, text messages, emails, location data, health information, biometric templates, web browsing history, social media records, passwords, and other documents. Access control for this data is maintained via several essential technologies, which we describe below.

- Software security and isolation. Modern smartphone operating systems are designed to enforce access control for users and application software. This includes restricting input/output access to the device, as well as ensuring that malicious applications cannot access data to which they are not entitled. Bypassing these restrictions to run arbitrary code requires making fundamental changes to the operating system, either in memory or on disk, a technique that is sometimes called “jailbreaking” on iOS or “rooting” on Android.

- Passcodes and biometric access. Access to an Apple or Android smartphone’s user interface is, in a default installation, gated by a user-selected passcode of arbitrary strength. Many devices also deploy biometric sensors based on fingerprint or face-recognition as an alternative means to unlock the device.

- Disk and file encryption. Smartphone operating systems embed data encryption at either the file or disk volume level to protect access to files. This enforces access control to data even in cases where an attacker has bypassed the software mechanisms controlling access to the device. Encryption mechanisms typically derive encryption keys as a function of the user-selected passcode and device-embedded secrets, which is designed to ensure that access to the device requires both user consent and physical control of the hardware.

- Secure device hardware. Increasingly, smartphone manufacturers have begun to deploy secure co-processors and virtualized equivalents in order to harden devices against both software attacks and physical attacks on device hardware. These devices are designed to strengthen the encryption mechanisms, and to guard critical data such as biometric templates.

- Secure backup and cloud systems. Most smartphone operating systems offer cloud-based data backup, as well as real-time cloud services for sharing information with other devices. Historically, access to cloud backups has been gated by access controls that are solely under the discretion of the cloud service provider, such as password authentication, making this a fruitful target for both attackers and law enforcement to gain access to data. More recently, providers have begun to deploy provider-inaccessible encrypted backup systems that enforce access controls that require user-selected passcodes, with security of the data enforced by trusted hardware at the providers’ premises.
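The key derivation idea in the disk and file encryption item above can be sketched as follows. This is a minimal illustration under stated assumptions, not the scheme used by any particular vendor: the function names, the salt, the iteration count, and the use of PBKDF2 followed by an HMAC keyed with a device secret are all choices made for this example. The second helper does the simple arithmetic for an exhaustive search over numeric passcodes, given an assumed cost per guess.

```python
import hashlib
import hmac


def derive_key(passcode: str, device_secret: bytes, salt: bytes,
               iterations: int = 100_000) -> bytes:
    """Derive a 32-byte key that depends on both the passcode and a device secret.

    Hypothetical construction for illustration only.
    """
    # Stretch the low-entropy passcode so that each guess is costly.
    stretched = hashlib.pbkdf2_hmac("sha256", passcode.encode("utf-8"), salt, iterations)
    # Entangle the result with a secret assumed to be unreadable outside the device,
    # so guesses cannot simply be moved to faster off-device hardware.
    return hmac.new(device_secret, stretched, hashlib.sha256).digest()


def worst_case_search_seconds(digits: int, seconds_per_guess: float) -> float:
    """Time to try every numeric passcode of the given length at a fixed guess cost."""
    return (10 ** digits) * seconds_per_guess


if __name__ == "__main__":
    key = derive_key("123456", device_secret=b"\x01" * 32, salt=b"example-salt")
    print(key.hex())
    # 0.1 s per guess is an assumed figure for illustration, not a measured one.
    for n in (4, 6):
        hours = worst_case_search_seconds(n, 0.1) / 3600
        print(f"{n}-digit passcode: about {hours:.1f} hours worst case")
```

Because the device secret enters the derivation, the same passcode yields unrelated keys on different devices. The arithmetic also shows why short numeric passcodes depend on additional limits on guessing: at the assumed 0.1 seconds per guess, all six-digit passcodes can be tried in roughly 28 hours.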

2.2 Threat Model

In order to discuss the vulnerability of mobile devices it is pertinent to consider the threat models which underpin our analysis. In fact, in this case the threats of the traditional remote network adversary are relatively well-mitigated. It is with a particular additional capability that our threat actors, namely law enforcement forensic investigators and criminal hackers, are able to bypass the existing mitigations: protracted physical access to the mobile device. This access facilitates data extraction in that devices can be kept charging, and mitigations such as disabling physical data ports or remote lock or wipe can be evaded.

We consider deep physical analysis of hardware (particularly de-soldering and “de-capping” the silicon, often done with nitric acid to gain direct access to underlying physical logic implementations) out of scope, as such techniques seem to be prohibitively expensive and risk destroying device hardware or invalidating evidence; we see no clear evidence of this occurring at scale even in federal law enforcement offices. However, we do see evidence of some physical analysis in the form of academic and commercial research, to the extent of interposing the device’s connections to storage or power.

In some cases, law enforcement receives consent from the targets of investigation to access mobile devices. This consent is likely accompanied by passcodes, PINs, and/or passwords. A database of recent warrants against iOS devices shows that not only do law enforcement agents sometimes get this consent, they also seek warrants which nullify any later withdrawal of consent, and as such can use their position and access to completely compromise the device.

In other cases, law enforcement agencies are able to execute warrants which create a “geofence” or physical region and period of time, such that any devices which are found to have been present therein are subject to search, usually in the form of requesting data from cloud providers (Apple for iOS devices, and Google for Android). Such geofence warrants have massive reach and potential to violate the privacy of innocent passersby, but their use is a matter of policy and thus not in scope for our analysis.

Largely, the evidence we gather and present demonstrates that this physical access is used by law enforcement to attach commercial forensic tools which perform exploitation and data extraction on mobile devices. This approach can provide either data directly from the device or cloud access tokens resident in device memory, which can be further exploited to gain access to a target’s online accounts and content. These two methods, device and cloud extraction, can provide overlapping but different categories of sensitive personal data, and, together or individually, represent a massive breach of a target’s privacy.

2.3 Sensitive Data on Phones

The U.S. National Institute of Standards and Technology (NIST) maintains a list of data targets for mobile device acquisition forensic software. These are presented in Figure 2.1. The categories of data which forensic software tests attempt to extract provide us with a notion of what data is prioritized by law enforcement, and allow us to focus our examination of user data protection. The importance of these categories is corroborated by over 500 warrants against iPhones recently collected and released in the news, and articles posted by mobile forensics companies and investigators.

While a useful resource, this list does not capture the extent of potential privacy loss due to unauthorized mobile device access, primarily falling short in two ways. First, it does not capture the long-lived nature of some identifiers, nor the potential sensitivity of each item. Second, critically, mobile devices contain information about and from ourselves but also our networks of peers, friends, and family, and so privacy loss propagates accordingly. Further, due to the emerging capabilities of machine learning and data science techniques combined with continuously increasing availability of aggregated data sets, predictions and analysis (whether correct or not) make these potential violations of privacy nearly unbounded.

- Cellular network subscriber information: IMEI, MEID/ESN
- Personal Information Management (PIM) data: address book/contacts, calendar, memos, etc.
- Call logs: incoming, outgoing, missed
- Text messages: SMS, MMS (audio, graphic, video)
- Instant messages
- Stand-alone files: audio, documents, graphic, video
- E-mail
- Web activity: history, bookmarks
- GPS and geo-location data
- Social media data: accounts, content
- SIM/UICC data: provider, IMSI, MSISDN, etc.

Chapter 3 Apple iOS

Apple devices are ubiquitous in countries around the world. In Q4 of 2019 alone, almost 73 million iPhones and almost 16 million iPads shipped. While Apple devices represent a minority of the global smartphone market share, Apple maintains approximately a 48% share of the smartphone market in the United States, with similar percentages in many western nations. Overall, Apple claims 1.4 billion active devices in the world. Along with increasing usage trends, these factors make iPhones extremely valuable targets for hackers, with bug bounty programs offering up to $2 million USD, for law enforcement agencies executing warrants, and for governments seeking to surveil journalists, activists, or criminals.

Apple invests heavily in restricting the operating system and application software that can run on their hardware. As such, even users with technical expertise are limited in their ability to extend and protect Apple devices with their own modifications, and Apple software development teams represent essentially the sole technical mitigation against novel attempts to access user data without authorization. The high value of Apple software exploits and Apple’s centralized response produce a cat-and-mouse game of exploitation and patching, where users can never be fully assured that their device is not vulnerable. Apple undertakes protecting user devices through numerous and varied mitigation strategies, and while these include both technical and business approaches, the technical will be primarily and thoroughly examined in this work.

3.1 Protection of User Data in iOS

In this section we provide an overview of key elements of Apple’s user data protection strategy that cover the bulk of on-device and cloud interactions supported by iOS devices. This overview is largely based on information published by Apple, and additionally on external (to Apple) research, product analyses, and security tests.

User authentication

Physical interaction is the primary medium of modern smartphones. In order to secure a device against unauthorized physical access, some form of user authentication is needed. iOS devices provide two mechanisms for this: numeric or alphanumeric passcodes and biometric authentication. In early iPhones, Apple suggested a default of four-digit numeric passcodes, but now suggests a six-digit numeric passcode. Users may additionally opt for longer alphanumeric passphrases, or (against Apple’s advice) disable passcode authentication entirely.

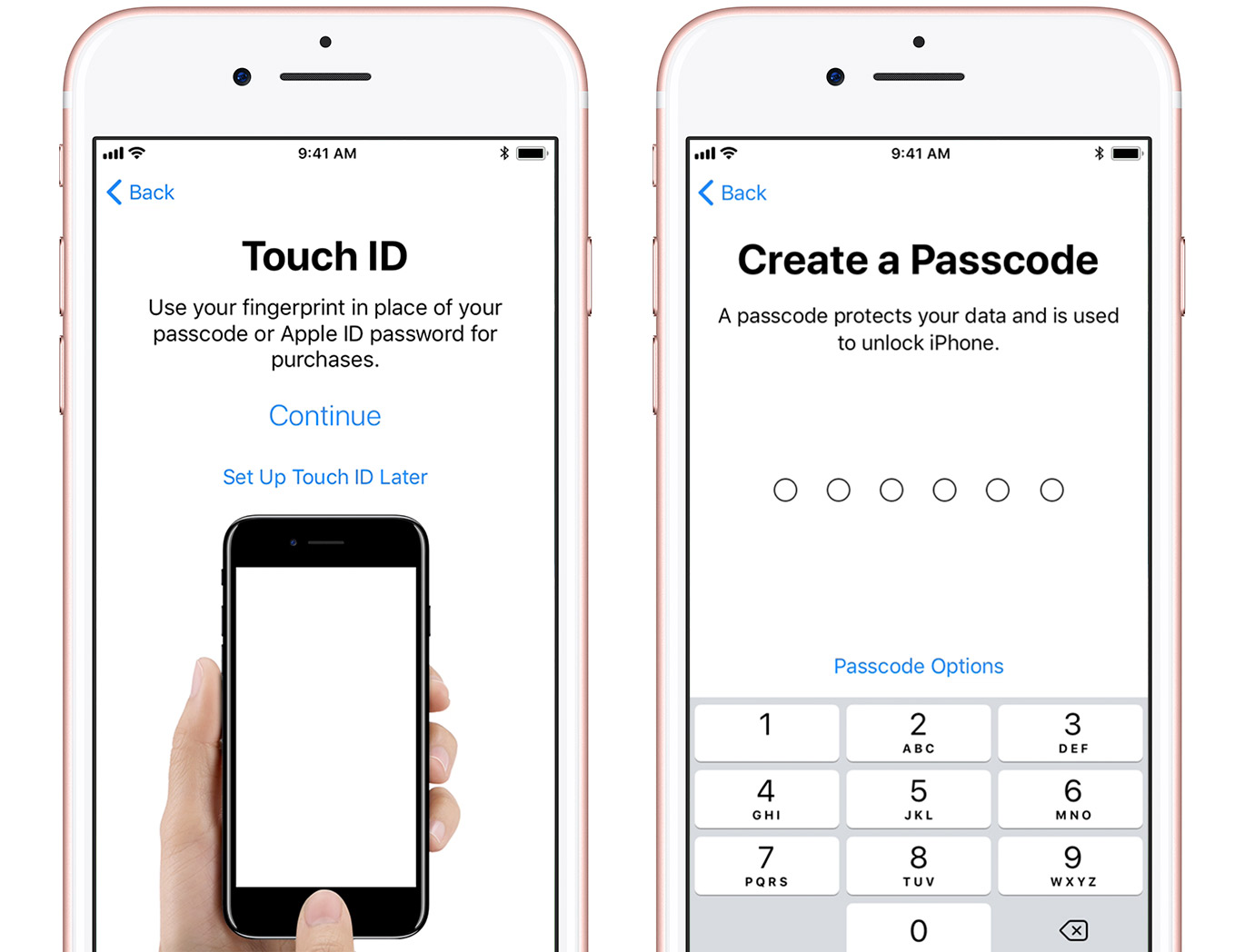

Because there are a relatively small number of 6-digit passcodes, iOS is designed to rate-limit passcode entry attempts in order to prevent attackers from conducting brute-force guessing attacks. In the event of an excessive number of entry attempts, device access may be temporarily locked and user data can be permanently deleted. To improve the user experience while maintaining security, Apple employs biometric access techniques in its devices: these include TouchID, based on a capacitive fingerprint sensor, and a more recent replacement FaceID, which employs face recognition using a depth-sensitive camera. The image in Figure 3.1 demonstrates the TouchID and six-digit passcode setup interfaces on iOS.

Apple also restricts a number of passcodes that are deemed too common, or too “easily guessed.” For a thorough examination of this list and its effects on iOS, refer to recent works by Markert et al.

Code signing

iOS tightly restricts the executable code that can be run on the platform. This is enforced using digital signatures. The mechanics of iOS require that only software written by Apple or by an approved developer can be executed on the device.

Trusted boot. Apple implements signatures for the software which initializes the operating system, and the operating system itself, in order to verify its integrity. These signature checks are embedded in the low-level firmware called Boot ROM. The primary purpose of this security measure, according to Apple, is to ensure that long-term encryption keys are protected and that no malicious software runs on the device.

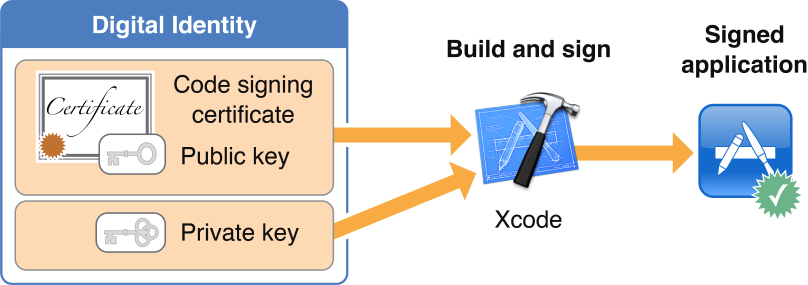

App signing. Apple authorizes developers to distribute code using a combination of Apple-controlled signatures and a public-key certificate infrastructure that allows the system to scale. Organizations may also apply and pay for enterprise signing certificates that allow them to authenticate software for specially-authorized iOS devices (those that have installed the organization’s certificate). This is intended to enable companies to deliver proprietary internal apps to employees, although the mechanism has been subverted many times for jailbreaking, for advertising or copyright fraud, and for device compromise. The image in Figure 3.2 displays Apple documentation of the code signing process for developers.

Sandboxing and code review

iOS enforces restrictions that limit each application’s access to user data and the operating system APIs. This mechanism is designed to protect against incursions by malicious third-party applications, and to limit the damage caused by exploitation of a non-malicious application. To implement this, iOS runs each application in a “sandbox” that restricts its access to the device filesystem and memory space, and carries a signed manifest that details allowed access to system services such as location services. For applications distributed via its App Store – which is the only software installation method allowed on a device with default settings – Apple additionally performs automated and manual code review of third-party applications. Despite these protections, malicious or privacy-violating applications have passed review.

iOS 14 includes additional privacy transparency and control features such as listing privacy-relevant permissions in the App Store, allowing finer-grained access to photos, an OS-supported recording indicator, Wi-Fi network identifier obfuscation, and optional approximate location services. However, most of these features are focused on the privacy of users from app developers rather than from the phone itself, the relevant adversary under the threat model of forensics.

Encryption

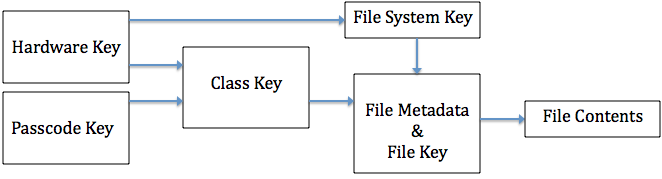

While software protections provide a degree of security for system and application data, these security mechanisms can be bypassed by exploiting logic vulnerabilities in software or flaws in device hardware. Apple attempts to address this concern through the use of data encryption. This approach provides Apple devices with two major benefits: first, it ensures that Apple device storage can be rapidly erased, simply by destroying a single encryption key carried within the device. Second, encryption allows Apple to provide strong file-based access control to files and data objects on the device, even in the event that an attacker bypasses security controls within the operating system. The image in Figure 3.3 depicts the key hierarchy used in iOS Data Protection.

iOS employs industry-standard cryptography including AES, ECDH over Curve25519, and various NIST-approved standard constructions to encrypt files in the filesystem. To ensure that data access is controlled by the user and is tied to a specific device, Apple encrypts all files using a key that is derived from a combination of the user-selected passcode and a unique secret cryptographic key (called the UID key) that is stored within device hardware. In order to recover the necessary decryption keys following a reboot, the user must enter the device passcode. When the device is locked but has been unlocked since last boot, biometrics suffice to unlock these keys. To prevent an attacker from bypassing the encryption by guessing a large number of passcodes, the system enforces guessing limits in two ways: by using a computationally-intensive password-based key derivation function that requires 80 ms to derive a key on the device hardware, and by enforcing guess limits and increasing time intervals using trusted hardware (see further below) and software.
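To make the effect of the 80 ms key derivation concrete, the following minimal Python sketch estimates the worst-case time to try every numeric passcode on the device itself. It assumes the key derivation is the only cost per guess and deliberately ignores the escalating delays and the optional erase policy; the function and constant names are illustrative and are not part of any Apple API.

```python
# Worst-case on-device exhaustive search time for numeric passcodes.
# Assumption: each guess costs only the ~80 ms key derivation quoted in the
# text; escalating delays and erase-after-failed-attempts are NOT modeled.
KDF_SECONDS_PER_GUESS = 0.080


def exhaustive_search_seconds(digits: int,
                              per_guess: float = KDF_SECONDS_PER_GUESS) -> float:
    """Seconds needed to try all 10**digits numeric passcodes."""
    return (10 ** digits) * per_guess


for digits in (4, 6):
    seconds = exhaustive_search_seconds(digits)
    print(f"{digits}-digit passcode: {seconds / 3600:.2f} hours worst case")
```

Under these assumptions a four-digit passcode space is exhausted in roughly 13 minutes and a six-digit space in roughly 22 hours, which suggests why the key derivation cost alone is not sufficient and additional guess limits are enforced.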

Data Protection Classes. Apple provides interfaces to enable encryption in both first-party and third-party software, using the iOS Data Protection API. Within this package, Apple specifies several encryption “protection classes” that application developers can select when creating new data files and objects. These classes allow developers to specify the security properties of each piece of encrypted data, including whether the keys corresponding to that data will be evicted from memory after the phone is locked (“Complete Protection” or CP) or shut down (“After First Unlock” or AFU).

We present a complete list of Data Protection classes in Figure 3.4. As we will discuss below, the selection of protection class makes an enormous practical difference in the security afforded by Apple’s file encryption. Since, in practice, users reboot their phones only rarely, many phones are routinely carried in a locked-but-authenticated state (AFU). This means that for protection classes other than CP, decryption keys remain available in the device’s memory. Analysis of forensic tools shows that to an attacker who obtains a phone in this state, encryption provides only a modest additional protection over the software security and authentication measures described above.

- Complete Protection (CP): Encryption keys for this data are evicted shortly after device lock (10 seconds).
- Protected Unless Open (PUO): Using public-key encryption, PUO allows data files to be created and encrypted while the device is locked, but only decrypted when the device is unlocked, by keeping an ephemeral public key in memory but evicting the private key at device lock. Once the file has been created and closed, data in this class has properties similar to Complete Protection.
- Protected Until First User Authentication (a.k.a. After First Unlock) (AFU): Encryption keys are decrypted into memory when the user first enters the device passcode, and remain in memory even if the device is locked.
- No Protection (NP): Encryption keys are encrypted by the hardware UID keys only, not the user passcode, when the device is off. These keys are always available in memory when the device is on.
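The practical consequence of these classes can be summarized as a lookup from protection class to the device states in which the corresponding key is held in memory. The Python sketch below is a simplified model built only from the descriptions above: the type and function names are hypothetical, the 10-second eviction delay for CP is ignored, and PUO is modeled only for files that have already been created and closed.

```python
from enum import Enum


class DeviceState(Enum):
    BEFORE_FIRST_UNLOCK = "powered on, passcode not yet entered since boot"
    LOCKED_AFTER_FIRST_UNLOCK = "locked, but unlocked at least once since boot"
    UNLOCKED = "unlocked"


class ProtectionClass(Enum):
    CP = "Complete Protection"
    PUO = "Protected Unless Open"
    AFU = "Protected Until First User Authentication"
    NP = "No Protection"


# States in which the key needed to decrypt an existing, closed file is in
# memory. Simplified reading of the class descriptions, not Apple's code.
_KEY_AVAILABLE = {
    ProtectionClass.CP: {DeviceState.UNLOCKED},
    ProtectionClass.PUO: {DeviceState.UNLOCKED},
    ProtectionClass.AFU: {DeviceState.LOCKED_AFTER_FIRST_UNLOCK,
                          DeviceState.UNLOCKED},
    ProtectionClass.NP: set(DeviceState),
}


def key_in_memory(protection: ProtectionClass, state: DeviceState) -> bool:
    """True if data of this class is decryptable without the passcode."""
    return state in _KEY_AVAILABLE[protection]


def exposed_classes(state: DeviceState) -> list:
    """Protection classes whose keys are in memory in the given state."""
    return [p for p in ProtectionClass if key_in_memory(p, state)]


for state in DeviceState:
    print(state.name, [p.name for p in exposed_classes(state)])
```

In this model a device seized in the locked-but-authenticated state exposes the keys for AFU and NP data, while only CP and closed PUO files remain protected by the passcode.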

A natural question arises: why not simply apply CP to all classes of data? This would seriously hamper unauthorized attempts to access user data. However, the answer appears to lie in user experience. Examining the data in Table 3.1, it seems likely that data which is useful to apps which run in the background, including while the device is locked, is kept in the AFU state in order to enable continuous services such as VPNs, email synchronization, contacts, and iMessage. For example, if a user’s contacts were protected using CP, then a locked phone would be unable to display a name associated with a phone number for an incoming text message, and likely would just display the phone number itself. This would severely impact the ability of iOS to preview sender and message content in lock screen notifications.

Keychain

iOS provides the system and applications with a secure key-value store API called the Keychain for storing sensitive secrets such as keys and passwords. The Keychain provides encrypted storage and permissioned access to these secret items via a public API which third-party app developers can build around. This Keychain data is encrypted using keys that are in turn protected by device hardware keys and the user passcode, and can optionally be placed into protection classes that mirror the protection classes in Figure 3.4. In addition to these protection classes, the Keychain also introduces an optional characteristic called Non-Migratory (NM), which ensures that any protected data can only be decrypted on the same device on which it was encrypted. This mechanism is enforced via the internal hardware UID key, which cannot be transported off of the device.

Apple selects the Data Protection classes used by built-in applications such as iMessage and Photos, while third-party developers may choose these classes when developing applications. If they do not explicitly select a different protection class, the default class used is Protected Until First User Authentication, or AFU. Apple’s documentation claims that, at least, the following applications’ data falls under some degree of Data Protection: Messages, Mail, Calendar, Contacts, Photos, and Health, in addition to all third-party apps in iOS 7 and later. Refer to Table 3.1 for more details on Data Protection, and to Figure 3.12 for details on data which is necessarily AFU or less protected due to its availability via forensic tools.

Backups

iOS devices can be backed up either to iCloud or to a personal computer. When backing up to a personal computer, users may set an optional backup password. This password is used to derive an encryption key that, in turn, protects a structure called the “Keybag.” The Keybag contains a bundle of further encryption keys that encrypt the backup in its entirety. A limitation of this mechanism is that the user-specified backup password must be extremely strong: since this password is not entangled with device hardware secrets, stored backups may be subject to sophisticated offline dictionary attacks that can guess weak passwords. However, iOS uses 10 million iterations of PBKDF2 to significantly inhibit such password cracking. iOS devices may also be configured to back up to Apple iCloud servers. In this instance, data is encrypted asymmetrically using Curve25519 (so that backups can be performed when the device is locked without exposing secret keys), and those keys are encrypted with “iCloud keys” known to Apple to create the iCloud Backup Keybag. This means that Apple itself or a malicious actor who can guess or reset user credentials can access the contents of the backup. For both types of backups, the Keychain is additionally encrypted with a key derived from the hardware UID key to prevent restoring it to a new device.

Aside from Mail, which is not encrypted at rest on the server, all other backup data is stored encrypted with keys that Apple has access to. This implies that such data can be accessed through an unauthorized compromise of Apple’s network, a stolen credential attack, or compelled access by authorized government officials. The data classes included in an iCloud backup are listed in Figure 3.5.
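The password-stretching step for local backups can be illustrated with the Python standard library. This is a minimal sketch rather than Apple's actual construction: the 10 million iteration count comes from the text, while the choice of HMAC-SHA-256, the 32-byte output length, and the function name `derive_backup_key` are assumptions made for illustration.

```python
import hashlib

# Iteration count quoted in the text for local backup passwords.
BACKUP_KDF_ITERATIONS = 10_000_000


def derive_backup_key(password: str, salt: bytes,
                      iterations: int = BACKUP_KDF_ITERATIONS) -> bytes:
    """Stretch a backup password into a 32-byte key with PBKDF2.

    Assumption: HMAC-SHA-256 as the PRF and a 32-byte key; the real backup
    format may differ. No device secret is mixed in, so anyone holding the
    backup file can run this same computation offline for each guess.
    """
    return hashlib.pbkdf2_hmac("sha256", password.encode("utf-8"),
                               salt, iterations, dklen=32)


# Demonstration with a reduced iteration count so it finishes quickly; the
# full count makes every single guess correspondingly more expensive.
demo_key = derive_backup_key("correct horse battery staple",
                             salt=b"\x00" * 16, iterations=10_000)
print(demo_key.hex())
```

Because the cost of each guess scales linearly with the iteration count, a high count slows dictionary attacks by a constant factor but cannot compensate for a password drawn from a small candidate set.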

- App data
- Apple Watch backups
- Device settings
- Home screen and app organization
- iMessage, SMS, and MMS messages
- Photos and videos
- Purchase history from Apple services
- Ringtones
- Visual Voicemail password

iCloud

In addition to backups, iCloud can be used to store and synchronize various classes of data, primarily for built-in default apps such as Photos and Documents. Third-party apps’ files are also included in iCloud Backups unless the developers specifically opt out. Apple encrypts data in transit using Transport Layer Security (TLS) as is standard for internet traffic. Data at rest, however, is a more complex story: Mail is stored unencrypted on the server (which Apple claims is an industry standard practice). The data classes in Figure 3.6 are stored encrypted with a 128-bit AES key known to Apple, and the data classes in Figure 3.7 are stored encrypted with a key derived from the user passcode and are thus protected from even Apple. There are caveats to these lists, including that Health data is only end-to-end encrypted if two-factor authentication is enabled for iCloud, and that Messages in iCloud, which can be enabled in the iOS settings, uses end-to-end encryption, but the key is also included in iCloud backups and thus can be accessed by Apple if iCloud backup is enabled.

The user experience of controlling access to iCloud data embeds relatively unpredictable aspects: for example, disabling iCloud for the default Calendar app prevents the sending of calendar invites via email on iOS 13. A variety of exceptions and special cases, like the iMessage example above, combined with unpredictable side-effects on user experience, makes it more difficult for users to secure a device by adjusting user-facing settings.

iCloud data encrypted with a key known to Apple (Figure 3.6):

- Safari History & Bookmarks
- Calendars
- Contacts
- Find My
- iCloud Drive
- Messages in iCloud
- Notes
- Photos
- Reminders
- Siri Shortcuts
- Voice Memos
- Wallet Passes

iCloud data encrypted with a key derived from the user passcode (Figure 3.7):

- Apple Card transactions
- Home data
- Health data
- iCloud Keychain
- Maps data
- Memoji
- Payment information
- Quicktype Keyboard learned vocabulary
- Safari History and iCloud Tabs
- Screen Time
- Siri information
- Wi-Fi passwords
- W1 and H1 Bluetooth keys

CloudKit

Third-party developers can also integrate with iCloud in iOS applications via CloudKit, an API which allows applications to access cloud storage with configurable properties that allow data to be shared in real-time across multiple devices. CloudKit data is encrypted into one or more “containers” under a key hierarchy similar to Data Protection. The top-level key in this hierarchy is the “CloudKit Service Key.” This key is stored in the synchronized user Keychain, inaccessible to Apple, and is rotated any time the user disables iCloud Backup.

iCloud Keychain

iCloud Keychain extends iCloud functionality to provide two services for Apple devices: Keychain synchronization across devices, and Keychain recovery in case of device loss or failure.

1. Keychain syncing enables trusted devices (see below) to share Keychain data with one another using asymmetric encryption. The data available for Keychain syncing includes Safari user data (usernames, passwords, and credit card numbers), Wi-Fi passwords, and HomeKit encryption keys. Third-party applications may opt in to have their Keychain data synchronized.

2. Keychain recovery allows a user to escrow an encrypted copy of their Keychain with Apple. The Keychain is encrypted with a “strong passcode” known as the iCloud Security Code, discussed below.

Apple documentation defines a “trusted device” as:

“an iPhone, iPad, or iPod touch with iOS 9 and later, or Mac with OS X El Capitan and later that you’ve already signed in to using two-factor authentication. It’s a device we know is yours and that can be used to verify your identity by displaying a verification code from Apple when you sign in on a different device or browser.”

In contrast with standard iCloud and iCloud backups, iCloud Keychain provides additional security guarantees for stored data. This is enforced through the use of trusted Hardware Security Modules (HSMs) within Apple’s back-end data centers. This system is designed by Apple to ensure that even Apple itself cannot access the contents of iCloud Keychain backups without access to the iCloud Security Code. This code is generated either from the user’s passcode if Two-Factor Authentication (2FA) is enabled, or chosen by the user (optionally generated on-device) if 2FA is not enabled. When authenticating to the HSMs which protect iCloud Keychain recovery, the device never transmits the iCloud Security Code; instead, it uses the Secure Remote Password (SRP) protocol. After the HSM cluster verifies that the limit of 10 failed attempts has not been reached, it sends a copy of the encrypted Keychain to the device for decryption. If the limit of 10 attempts is exceeded, the record is destroyed and the user must re-enroll in iCloud Keychain to continue using its features. As a final note, the software that runs on Apple HSMs must be digitally signed; Apple asserts that the signing keys required to further alter the software are physically destroyed after HSM deployment, preventing the company from deliberately modifying these systems to access user data.
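The attempt-limiting behavior described above can be sketched as a small state machine. The following Python model is illustrative only: the class name `EscrowRecord` is hypothetical, a hashed comparison stands in for the SRP exchange (which is not implemented here), and the record is assumed to be destroyed on the tenth failure, a detail the description leaves open. Whether a successful recovery resets the counter is also not stated, so the counter is never reset in this sketch.

```python
import hashlib
import hmac

MAX_FAILED_ATTEMPTS = 10  # limit quoted in the text


class EscrowRecord:
    """Toy model of an escrowed Keychain guarded by an attempt counter."""

    def __init__(self, security_code: str, encrypted_keychain: bytes):
        # Stand-in for an SRP verifier; real SRP never sends the code itself.
        self._verifier = hashlib.sha256(security_code.encode("utf-8")).digest()
        self._blob = encrypted_keychain
        self.failed_attempts = 0

    @property
    def destroyed(self) -> bool:
        return self._blob is None

    def recover(self, claimed_code: str):
        """Return the encrypted Keychain on success, None on a failed try."""
        if self.destroyed:
            raise LookupError("escrow record destroyed; re-enrollment required")
        claimed = hashlib.sha256(claimed_code.encode("utf-8")).digest()
        if hmac.compare_digest(claimed, self._verifier):
            return self._blob
        self.failed_attempts += 1
        if self.failed_attempts >= MAX_FAILED_ATTEMPTS:
            # Assumption: destruction happens on the tenth failed attempt.
            self._blob = None
            self._verifier = None
        return None


record = EscrowRecord("123456", b"ciphertext")
print(record.recover("000000"))  # failed attempt, prints None
print(record.recover("123456"))  # correct code, prints the encrypted blob
```

The property this design aims for is that the counter lives inside the HSM, so guessing the iCloud Security Code cannot be parallelized or retried beyond the limit even by the service operator.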

Trusted hardware

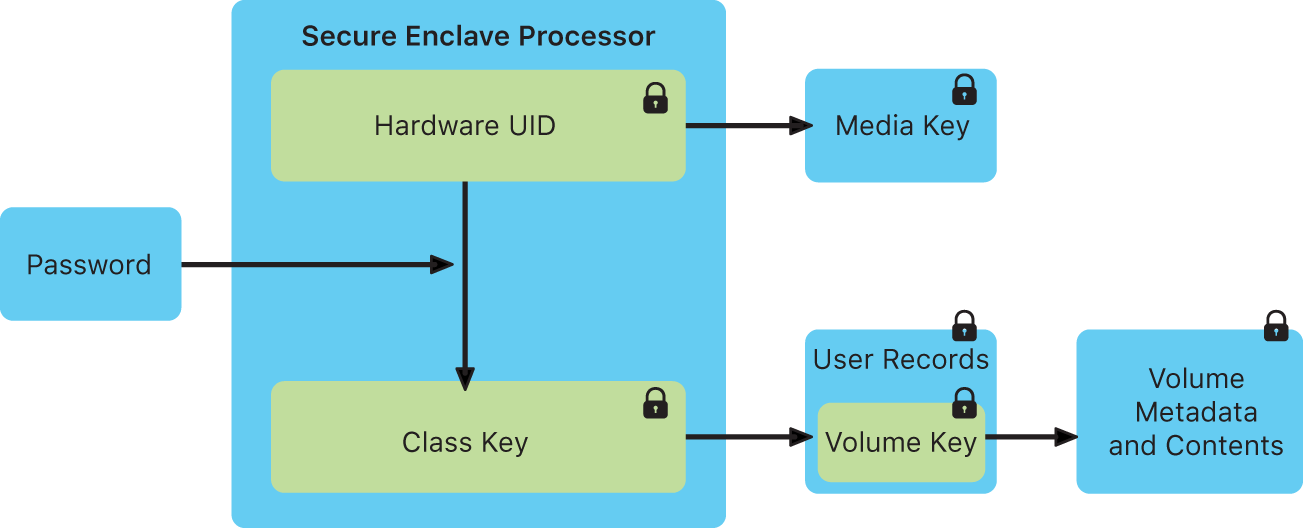

Apple provides multiple dedicated components to support encryption, biometrics and other security functions. Primary among these is the Secure Enclave Processor (SEP), a dedicated co-processor which uses encrypted memory and handles processes related to user authentication, secret key management, encryption, and random number generation. The SEP is intended to enable security against even a compromised iOS kernel by executing cryptographic operations in separate dedicated hardware. The SEP negotiates keys to communicate with TouchID and FaceID subsystems (the touch sensor or the Neural Engine for facial recognition), provides keys to the Crypto Engine (a cryptography accelerator), and communicates with a “secure storage integrated circuit” for random number generation, anti-replay counters, and tamper resistance. The image in Figure 3.8 documents the encryption keys the SEP derives from the user passcode.

Apple documentation describes FaceID as leveraging the Neural Engine and the SEP: “A portion of the A11 Bionic processor’s neural engine—protected within the Secure Enclave—transforms this data into a mathematical representation and compares that representation to the enrolled facial data.” As worded, this description fails to fully convey the features of hardware which make this possible, and as such we must speculate as to the exact method by which FaceID data is processed and secured. For example, it is possible that the Neural Engine simply provides data directly to the SEP, or via the application processor (AP). It’s also possible that, similar to TouchID, the Neural Engine creates a shared key with the SEP and then passes encrypted data through the AP. Physical inspection of the iPhone X (the first generation with FaceID) suggests that the Neural Engine and SEP are inside the A11 package, as in the earlier design with the SEP and A7 SoC in iPhone 5s. iPhones 6 and later (among other contemporaneous devices, e.g. Apple Watches and some iPads) additionally support Apple Pay and other NFC/Suica communication of secrets via the Secure Element, a wireless-enabled chip which stores encrypted payment card and other data. The image in Figure 3.9 documents the LocalAuthentication framework through which TouchID and FaceID operate.

Restricting attack surface

A locked iOS device has very few methods of data ingress available: background downloads such as sync (email, cloud services, etc.), Push notifications, certain network messages via Wi-Fi, Bluetooth, or the cellular network, and the physical Lightning port on the device. Exploits delivered to a locked device must necessarily use one of these avenues. With the introduction of USB Restricted Mode, Apple sought to limit the ability of untrusted USB Lightning devices, such as those running forensic software, to access or interact with iOS. By reducing attack surface, iOS complicates or mitigates attacks. This protection mode simply disables USB communication for unknown devices after the iOS device is locked for an hour without USB accessory activity, or upon first use of a USB accessory; known devices are remembered for 30 days. If no accessories are used for three days, the lockout period is reduced from an hour to immediately after device lock. However, this protection is not complete, as discussed in §3.3.
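The timing rules of USB Restricted Mode can be expressed as a small decision function. The Python sketch below encodes only one reading of the description above, for an unknown accessory: the function and constant names are hypothetical, the 30-day memory of known accessories is not modeled, and accessory activity while the device is locked (which may restart the one-hour window) is not modeled either.

```python
from datetime import timedelta
from typing import Optional

LOCK_GRACE = timedelta(hours=1)          # window after lock, from the text
IDLE_ACCESSORY_LIMIT = timedelta(days=3)  # no accessory use, from the text


def usb_data_allowed(locked_for: Optional[timedelta],
                     since_last_accessory: timedelta) -> bool:
    """Whether an unknown USB accessory may communicate with the device.

    locked_for is None while the device is unlocked. Simplified model of
    the described policy, not Apple's implementation.
    """
    if locked_for is None:
        return True  # unlocked device: the restriction does not apply
    if since_last_accessory >= IDLE_ACCESSORY_LIMIT:
        return False  # no accessory for three days: blocked right at lock
    return locked_for < LOCK_GRACE  # otherwise blocked after one hour locked


# A device locked 30 minutes ago that used an accessory yesterday.
print(usb_data_allowed(timedelta(minutes=30), timedelta(days=1)))  # True
# The same device after two hours locked.
print(usb_data_allowed(timedelta(hours=2), timedelta(days=1)))     # False
```

In this model, a device that rarely uses wired accessories is closed to unknown USB devices as soon as it locks, which narrows the window available to tools that rely on the Lightning port.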

iMessage and FaceTime

Each iPhone ships with two integrated communication packages: iMessage and FaceTime. The iMessage platform is incorporated within the Messages application as an alternative to SMS/MMS, and provides end-to-end encrypted messaging communications with other Apple devices. The FaceTime app provides end-to-end encrypted audio and videoconferencing between Apple devices. iMessage messages are encrypted using a “signcryption” scheme recently analyzed by Bellare and Stepanovs, with the Apple Identity Service serving as a trusted authority for user public keys. FaceTime calls are encrypted using a scheme that Apple has not documented, but claims is forward-secure based on the Secure Real-time Transport Protocol (SRTP). Although these protocols are end-to-end encrypted and authenticated, they rely on the Apple Identity Service to ensure that participants are authentic.

3.2 History of Apple Security Features

The current hardware and software security capabilities of iOS devices are many and varied, ranging from access control to cryptography. These features were incrementally developed over time and delivered in new versions of iOS pre-loaded on or delivered to devices. An overwhelming percentage of iOS users update their devices: in June 2020, Apple found that over 92% of recent iPhones ran iOS 13, and almost all the remainder (7%) ran iOS 12; iOS 13 was released 9 months prior. The implication of this is that while most users receive the latest mitigations, users of older devices may receive only partial security against known attacks, especially when using devices which have reached end-of-life (no longer receiving updates from Apple). To make these limitations apparent, in Appendix A we provide a detailed overview of the historical deployment of new security features, as described by published Apple documents. This history is summarized in Tables 3.1 and 3.2.

| iOS (iPhone) | Data Protection | Notes |
|---|---|---|
| 1 (2G) | - | 4-digit passcodes |
| 2 (3G) | - | Option to erase user data after 10 failed passcode attempts introduced |
| 3 (3GS) | DP introduced | Encrypted flash storage when device off |
| 4 (4) | Mail & Attachments: PUO | PUO insecure due to implementation error on iPhone 4 until iOS 7 |
| 5 (4S) | - | AES-GCM replaces CBC in Keychain |
| 6 (5) | iTunes Backup: CP+NM; Location Data: CP; Mail Accounts: AFU; iMessage keys: NP+NM | Various other data at AFU or NP+NM class |
| 7 (5S) | Safari Passwords: CP; Authentication tokens: AFU; Default and third-party apps: AFU | SEP and TouchID introduced in iPhone 5S; third-party apps may opt in to higher classes |
| 8 (6) | App Documents: CP; Location Data: AFU? | User passcode mixed into encryption keys; XTS replaces CBC for storage encryption |
| 9 (6S) | Safari Bookmarks: CP | 6-digit passcode default introduced |
| 10 (7) | Clipboard: ?; iCloud private key: NP+NM | - |
| 11 (8 & X) | - | FaceID introduced in iPhone X; SEP memory adds an “integrity tree” to prevent replay attacks; USB Restricted Mode introduced to mitigate exploits delivered over Lightning connector |
| 12 (XS) | - | SEP enforces Data Protection in DFU (Device Firmware Upgrade) and Recovery mode to mitigate bypass via bootloader |
| 13 (11) | - | - |

| SoC (iPhone) | Hardware Changes | Notes |
|---|---|---|
| Samsung ARM-32 CPU (2G) | - | No dedicated hardware security components in the early phones |
| Samsung ARM-32 CPU (3G) | - | - |
| Samsung Cortex SoC (3GS) | - | Flash storage encryption driven by application processor (AP) |
| Apple A4 (4) | - | Shift to Apple-designed SoCs |
| A5 (4S) | Crypto Engine | Dedicated cryptography accelerator documented here, potentially included in earlier generations |
| A6 (5) | - | - |
| A7 (5S) | TouchID and Secure Enclave Processor (SEP) | Major change, including new UID key inside SEP and shared key between SEP and TouchID sensor |
| A8 (6) | - | Significant software changes in this generation which rely on hardware changes of A7 |
| A9 (6S) | Bus between flash and memory “isolated via the Crypto Engine” | Interpreting this documentation implies that hardware changed to physically enforce flash storage encryption |
| A10 Fusion (7) | - | - |
| A11 Bionic (8/X) | FaceID and Neural Engine | Neural Engine somehow integrated with SEP to enable facial recognition secure against malicious AP |
| A12 Bionic (XS) | “Secure Storage Integrated Circuit” | SSIC added to bolster SEP replay protection, RNG, and tamper detection |
| A13 Bionic (11) | - | - |

3.3 Known Data Security Bypass Techniques

As each iteration of Apple devices introduces or improves on security features, the commercial exploit/forensics and jailbreaking communities have reacted by developing new techniques to bypass those features. Bypassing iPhone protections is an attractive goal for threat actors and law enforcement agencies alike. Hackers can receive bug bounties amounting to six or seven digits for viable exploits; rogue governments can buy or develop and use malware to track human rights activists or political opponents; and law enforcement agencies can create or bolster cases through investigation of phone contents, with forensic software companies signing lucrative contracts to help them do so.

Law enforcement agencies in many jurisdictions may also submit legal requests for data. In pursuing compliance with these laws, Apple provides data to law enforcement when legal requests are made. Figure 3.10 documents data which Apple claims to be able or not able to provide to U.S. law enforcement. This method of access requires legal process, and certain information being provided to Apple, and some requests may be rejected under various circumstances. As such, this method of data collection may be supplemented or even entirely superseded by commercial data forensics methods described in §3.3.2 and §3.3.3.

Jailbreaks and software exploits

The primary means to bypass iOS security features is through the exploitation of software vulnerabilities in apps and iOS software. Jailbreaks are a class of such exploits central to forensic analysis of iOS. The defining feature of a jailbreak is to enable running unsigned code, such as a modified iOS kernel or apps that have not been approved by Apple or a trusted developer. Other software exploits which pertain to user data privacy (speculatively) include SEP exploits, exploits which may enable passcode brute-force guessing, and lock-screen bypasses. All of these exploits are discussed in §3.3.1.

Local device forensic data extraction

Once a device has been made accessible using a software exploit, actors may require technological assistance to perform forensic analysis of the resulting data. This requires tools that extract data from a device and render the results in a human-readable form. This latter niche has been actively filled by a variety of private companies, whose software is tested and used by the U.S. Department of Homeland Security (DHS) and local law enforcement, with public reporting on its effectiveness (refer to Figure 2.1 for the data targeted by mobile forensic software). DHS evaluations of forensic software tools reveal that, at least in laboratory settings, significant portions of the targeted data are successfully extracted from supported devices. Refer to §3.3.2 for discussion of the use of such tools, and to §3.4 for further examination of the history of forensic software.

Cloud forensic data extraction

Cloud integrations such as Apple iCloud enable valuable features such as backup and sync. They also create a data-rich pathway for information extraction, and represent a target for search warrants themselves. The various ways these services can be leveraged by hackers or law enforcement forensics are discussed in §3.3.3.

Data Apple makes available to law enforcement:

• Product registration information
• Customer service records
• iTunes subscriber information
• iTunes connection logs (IP address)
• iTunes purchase and download history
• Apple online store purchase information
• iCloud subscriber information
• iCloud email logs (metadata)
• iCloud email content
• iCloud photos
• iCloud Drive documents
• Contacts
• Calendars
• Bookmarks
• Safari browsing history
• Maps search history
• Messages (SMS/MMS)
• Backups (app data, settings, and other data)
• Find My connections and transactions
• Hardware address (MAC) for a given iPhone
• FaceTime call invitations
• iMessage capability query logs

Data Apple claims is unavailable:

• Find My location data
• Full data extractions/user passcodes on iPhone 6/iOS 8.0 and later
• FaceTime call content
• iMessage content

3.3.1 Jailbreaking and Software Exploits

Jailbreaking

While jailbreaks are often used for customization purposes, the underlying technology can also be used by third parties to bypass the software protection features of a target device, for example to bypass passcode lock screens or to forensically extract data from a locked device. Indeed, many commercial forensics packages make use of public jailbreaks released for this purpose. A commonality among these techniques is the need to deliver an “exploit payload” to a vulnerable software component on the device. Viable delivery mechanisms include physical ports such as the device’s USB/Lightning interface (much less viable if USB Restricted Mode is active). Alternatively, exploits may be delivered through data messages that are received and processed by iOS and app software, for example specially-crafted text messages or web pages. In many cases, multiple separate exploits are combined to form an “exploit chain”: the first exploit may obtain control of one software system on the device, while further exploits escalate control until the kernel has been breached. Once the kernel has been exploited, jailbreaks usually deploy a patch to allow unsigned code to run or to initiate custom behavior such as extraction of the filesystem.

Jailbreaking is a keystone of constructing bypasses to access user data because the iOS kernel is ultimately tasked with managing and retrieving sensitive data. As such, a kernel compromise often allows the immediate extraction of any data not explicitly protected by encryption using keys which cannot be derived from the application processor alone. Publicly-known jailbreaks are released by a seemingly small group of exploit developers. Jailbreaks are released targeting a specific iOS version, and more rarely target specific hardware (e.g. an iPhone model). Apple periodically releases software updates which patch some subset of the vulnerabilities distributed in these jailbreaks, and a process ensues in which exploit developers replace patched vulnerabilities with newly discovered, still-exploitable alternatives, until a major software change occurs (e.g. a new iOS major version). Table 3.4 provides the highlights of the history of jailbreaking in iOS, with many of these iterative updates omitted.

Jailbreaking was relatively popular in 2009. Exact counting is nearly impossible, but it was estimated that 10% of iOS devices in 2009 were jailbroken. In 2013, roughly 23 million devices ran Cydia, a popular software platform commonly used on jailbroken iOS devices. Because 150 million iPhones were sold that year, and total iPhone sales accelerated tremendously between 2009 and 2013, it is speculatively likely, though hard to measure, that the percentage of jailbroken devices declined notably. Some analysis has been undertaken as to the reasons for a decline in jailbreaking, if this is even a trend. Around 2016 (refer to Table 3.4) there was a marked transition in jailbreaks away from end-user usability and towards support for use by security researchers. Some of the more well-maintained jailbreaks did include single-click functionality or re-jailbreaking after reboot via a sideloaded app. It is possible that this transition took place in part due to the commercial viability of jailbreak production. As the market is relatively inflexibly supplied, prices for working jailbreaks increase directly with demand, and as such creators are less inclined to publicly share the exploits necessary for jailbreaking. The kinds of exploits needed for forensics against a locked phone, specifically those which exploit an interface on the locked phone (commonly the USB/Lightning interface and related components), would be highly valuable to a forensics software company which at a given time did not have a working exploit of its own.

The checkm8/checkra1n jailbreak exploits seem to be widely implemented in forensic analysis tools in 2020. These exploits work on iOS devices up to the iPhone X (that is, devices with A11 or earlier hardware) regardless of iOS version, and as such are widely applicable and thus useful. Cellebrite Advanced Services (the company's bespoke law enforcement investigative service) offers Before-First-Unlock access to the iPhone X and earlier models running up to the latest iOS, and as such we are relatively certain that it employs checkm8.

Although Cellebrite does not refer to it as jailbreaking, the exploits used in the Cellebrite UFED Touch and 4PC products either exploit the backup system to initiate a backup despite lacking user authorization, or exploit the bootloader to run custom Cellebrite code which extracts portions of the filesystem. We categorize these as equivalent to jailbreaks because of the similarities in practical implementation and impact.

Passcode guessing

To access records on devices that are not in the AFU state, or to access data that has been protected using the CP class, actors may need to recover the user’s passcode in order to derive the necessary decryption keys.

There are two primary obstacles to this process: first, because keys are derived from a combination of the hardware UID key and the user’s passcode, keys must be derived on the device, or the UID key must be physically extracted from silicon. There is no public evidence that the latter strategy is economically feasible. The second obstacle is that the iPhone significantly throttles attackers’ ability to conduct passcode guessing attacks on the device: this is accomplished through the use of guessing limits enforced (on more recent phones) by the dedicated SEP processor, as well as an approximately 80 millisecond password derivation time enforced by the use of a computationally-expensive key derivation function.
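The first obstacle can be illustrated with a minimal sketch. The construction below is an assumption chosen for illustration and is not Apple's actual derivation: `DEVICE_UID_KEY`, the salt, the use of HMAC-SHA256 and PBKDF2, and the iteration count are all hypothetical stand-ins (the iteration count is not calibrated to 80 ms). It shows only the general idea that a passcode entangled with a device-bound secret and then stretched cannot be guessed off the device.

```python
import hashlib
import hmac
import time

# Hypothetical stand-in for the hardware UID key. On a real device this value
# is fused into silicon and is not readable by software.
DEVICE_UID_KEY = bytes.fromhex("00112233445566778899aabbccddeeff" * 2)


def derive_passcode_key(
    passcode: str,
    salt: bytes,
    device_key: bytes = DEVICE_UID_KEY,
    iterations: int = 200_000,  # assumed work factor, for illustration only
) -> bytes:
    """Entangle the passcode with a device-bound key, then stretch the result."""
    # Step 1: mix the passcode with the device secret. Without the device key,
    # an attacker cannot even compute the input to the stretching step.
    entangled = hmac.new(device_key, passcode.encode("utf-8"), hashlib.sha256).digest()
    # Step 2: a deliberately slow key derivation function bounds the guess rate.
    return hashlib.pbkdf2_hmac("sha256", entangled, salt, iterations)


if __name__ == "__main__":
    salt = b"example-salt-16b"
    start = time.perf_counter()
    key = derive_passcode_key("123456", salt)
    elapsed_ms = (time.perf_counter() - start) * 1000
    print(f"derived a {len(key)}-byte key in {elapsed_ms:.1f} ms")
```

The same passcode yields a different key under a different `device_key`, which is why guesses must be run on the target device unless the device key itself is extracted.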

In older iPhones that do not include a SEP, passcode verification and guessing limits were enforced by the application processor. Various bugs in this implementation have enabled attacks which exploit the passcode attempt counter, either preventing it from incrementing or resetting it between attempts. With four- and six-digit passcodes, and especially with users commonly selecting certain passcodes, these exploits made brute-forcing the phone feasible for law enforcement. One particularly notable example of passcode brute-forcing from a technical perspective was contributed by Skorobogatov in 2016. This work explores the technical feasibility of mirroring flash storage on an iPhone 5C to enable unlimited passcode attempts. Because the iPhone 5C does not include a SEP with tamper-resistant NVRAM, the essence of the attack is to replace the storage carrying the retry counter in order to reset its value. The work demonstrates that the attack is indeed feasible and inexpensive to perform. Even earlier attacks include cutting power to the device when an incorrect passcode is entered, preempting the counter before it increments, although these have largely been addressed.
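The two counter attacks described above can be sketched with a toy model. Everything here is hypothetical: `PasscodeGate`, its attempt limit, and the dictionary standing in for flash storage are illustrative assumptions and do not reflect any real iOS code. The model contrasts a counter that is updated after the check (defeated by cutting power) with one that is committed before the check, and shows that storage mirroring defeats either ordering when the counter lives in replaceable storage.

```python
import copy


class PasscodeGate:
    """Toy model of a retry counter held in attacker-replaceable storage."""

    MAX_ATTEMPTS = 10  # assumed limit, for illustration only

    def __init__(self, passcode: str, commit_before_check: bool):
        self._passcode = passcode
        self.commit_before_check = commit_before_check
        self.storage = {"failed_attempts": 0}  # stands in for flash contents

    def try_unlock(self, guess: str, power_cut_on_failure: bool = False) -> bool:
        if self.storage["failed_attempts"] >= self.MAX_ATTEMPTS:
            raise PermissionError("attempt limit reached")
        if self.commit_before_check:
            # Record the attempt first; it is cleared only on success.
            self.storage["failed_attempts"] += 1
        correct = guess == self._passcode
        if not correct and power_cut_on_failure:
            # The attacker removes power as soon as the failure is observable,
            # so any bookkeeping after this point never runs.
            return False
        if correct:
            self.storage["failed_attempts"] = 0
        elif not self.commit_before_check:
            self.storage["failed_attempts"] += 1
        return correct


def brute_force_with_power_cut(gate: PasscodeGate):
    """Try every 4-digit passcode, cutting power after each wrong guess."""
    for n in range(10_000):
        guess = f"{n:04d}"
        if gate.try_unlock(guess, power_cut_on_failure=True):
            return guess
    return None


def brute_force_with_storage_mirror(gate: PasscodeGate):
    """Try every 4-digit passcode, restoring a clean storage copy as needed."""
    clean_copy = copy.deepcopy(gate.storage)
    for n in range(10_000):
        if gate.storage["failed_attempts"] >= gate.MAX_ATTEMPTS - 1:
            gate.storage = copy.deepcopy(clean_copy)  # swap in the mirrored copy
        guess = f"{n:04d}"
        if gate.try_unlock(guess):
            return guess
    return None
```

In this model, `brute_force_with_power_cut` succeeds only when `commit_before_check` is false, while `brute_force_with_storage_mirror` succeeds in both cases. This is consistent with the text's point that the counter needs to live in tamper-resistant storage rather than in storage an attacker can replace.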

For extremely strong passcodes (such as random alphanumeric passcodes, although these are relatively rarely used), the 80 ms guessing time may render passcode guessing attacks completely infeasible regardless of whether the SEP exists and is operating. For lower-entropy passcodes such as the default 6-digit numeric PIN, the SEP-enforced guessing limits represent the primary obstacle, and a guessing attack therefore requires techniques for overcoming those limits. We provide a detailed overview of the evidence for and against the in-the-wild existence of such exploits in §3.3. Refer to Table 3.3 for estimated passcode brute-force times under various circumstances.

| Passcode Length | 4 (digits) | 6 (digits) | 10 (digits) | 10 (all) |
|---|---|---|---|---|
| Total Passcodes | | | | |
| Total Allowed | 9,276 | 997,090 | - | - |
| 80 ms/attempt | 12.37 minutes | 22.16 hours | 25 years | |
| 80 ms/attempt, in expectation | 6.19 minutes | 11.08 hours | 12 years | |
| 10 mins/attempt | 70 days | 20 years | 200,000 yrs. | |
| 10 mins/attempt, in expectation | 35 days | 10 years | | |
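The arithmetic behind these estimates can be reproduced with a short sketch. The function names are hypothetical, and the model assumes (as an illustration, not a statement from the table) that guesses proceed at a constant rate and that the passcode is uniformly distributed, so the expected time is half the worst case. The 80 ms figures for 4- and 6-digit passcodes match the allowed-passcode counts in the table; the 10-minute figures appear to be rounded from the full numeric keyspace, which is also an inference.

```python
SECONDS_PER_DAY = 24 * 3600
SECONDS_PER_YEAR = 365.25 * SECONDS_PER_DAY


def worst_case_seconds(candidates: int, seconds_per_attempt: float) -> float:
    """Time to try every candidate passcode at a constant rate."""
    return candidates * seconds_per_attempt


def expected_seconds(candidates: int, seconds_per_attempt: float) -> float:
    """Average time when the passcode is equally likely to be any candidate."""
    return worst_case_seconds(candidates, seconds_per_attempt) / 2


if __name__ == "__main__":
    # Allowed passcode counts taken from the table above.
    print(f"4 digits @ 80 ms: {worst_case_seconds(9_276, 0.08) / 60:.2f} minutes")
    print(f"6 digits @ 80 ms: {worst_case_seconds(997_090, 0.08) / 3600:.2f} hours")
    print(f"10 digits @ 80 ms: {worst_case_seconds(10**10, 0.08) / SECONDS_PER_YEAR:.1f} years")
    # Full keyspace at one attempt every 10 minutes.
    print(f"4 digits @ 10 min: {worst_case_seconds(10**4, 600) / SECONDS_PER_DAY:.1f} days")
    print(f"6 digits @ 10 min: {worst_case_seconds(10**6, 600) / SECONDS_PER_YEAR:.1f} years")
    print(f"6 digits @ 10 min, expected: {expected_seconds(10**6, 600) / SECONDS_PER_YEAR:.1f} years")
```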

Exploiting the SEP

The Secure Enclave Processor (SEP) is a separate device that runs with unrestricted access to pre-configured regions of memory of the iOS device, and as such a SEP exploit provides an even greater level of access than an OS jailbreak. Moreover, exploitation of the SEP is the most likely means to bypass security mechanisms such as passcode guessing limits. In order to interface with the SEP with sufficient flexibility, kernel privileges are required, and thus a jailbreak is likely a prerequisite for SEP exploitation. If such an exploit chain could be executed, an attacker or forensic analyst might gain unfettered access to the encryption keys and functionality used to secure the device, and thus would be able to completely extract the filesystem.

In 2018, Grayshift, a forensics company based in Atlanta, Georgia, advertised and sold a device called GrayKey, which was purportedly able to unlock a locked or disabled iPhone by brute-forcing the passcode. Modern iPhones allegedly exploited in leaked GrayKey demonstration photos included a SEP, meaning that for these phones, brute-force protections should have prevented such an attack. As such, we speculate that GrayKey may have embedded not only a jailbreak but also a SEP exploit in order to enable this functionality. Other comparable forensic tools seemed able to access only a subset of the data which GrayKey promised at the time, which provides additional circumstantial evidence that SEP exploits, if they existed, were rare.

A 2018 article from the company MalwareBytes provided an alleged screenshot of an iPhone X (containing a SEP) running iOS 11.2.5 (the latest version at the time) in a before-first-unlock state (all data in the AFU and CP protection classes encrypted, with keys evicted from memory and thus unavailable to the kernel). The images indicate that the GrayKey exploit had successfully executed a guessing attack on a 6-digit passcode, with an estimated time-to-completion of approximately 2 days, 4 hours. The images also show a full filesystem image and an iTunes backup extracted from the device, which should only be possible if the passcode was known or somehow extracted. As the time to unlock increases with passcode complexity, we presume that GrayKey is able to launch a brute-force passcode guessing attack from within the exploited iOS, necessitating a bypass of SEP features which should otherwise prevent such an attack. Figure 3.11 depicts the GrayKey passcode guessing and extraction interfaces.
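As a rough consistency check on the estimate in the screenshot, the implied guessing rate can be computed directly. This sketch assumes, which the source does not state, that the displayed estimate corresponds to exhausting all 10^6 six-digit passcodes at a constant rate; the names below are hypothetical.

```python
KEYSPACE_6_DIGIT = 10 ** 6

# "2 days, 4 hours" as reported in the screenshot, converted to seconds.
REPORTED_ESTIMATE_SECONDS = (2 * 24 + 4) * 3600


def implied_seconds_per_attempt(total_seconds: float, candidates: int) -> float:
    """Per-guess time if the whole keyspace is tried at a constant rate."""
    return total_seconds / candidates


if __name__ == "__main__":
    rate = implied_seconds_per_attempt(REPORTED_ESTIMATE_SECONDS, KEYSPACE_6_DIGIT)
    print(f"implied rate: {rate * 1000:.0f} ms per attempt")
```

Under that assumption the implied rate is a little over twice the approximately 80 ms key derivation time, and far faster than any rate that SEP-enforced guessing delays would permit, which is in line with the presumption that those limits were bypassed.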

In August 2018, Grayshift unlocked an iPhone X with an unknown 6-digit passcode given to them by the Seattle Police Department; Grayshift was reportedly able to break the passcode in just over two weeks. Further documents imply extensive use of GrayKey to recover passcodes on iOS devices.


In January 2020, an FBI warrant application indicated that GrayKey was used to access a visibly locked iPhone 11 Pro Max. The significance of this access is twofold: the iPhone 11 Pro Max, released with iOS 13, is not vulnerable to the checkm8 jailbreak exploit, and the iPhone 11 includes a SEP. The apparent success of this extraction indicates that Grayshift possessed an exploit capable of compromising iOS 13. The warrants do not contain enough information to determine whether this exploit merely performed a jailbreak and logical forensic extraction of non-CP encrypted data, or whether it involved a complete compromise of the SEP and a successful passcode bypass.